Learning Paths

Last Updated: May 2, 2026 at 10:00

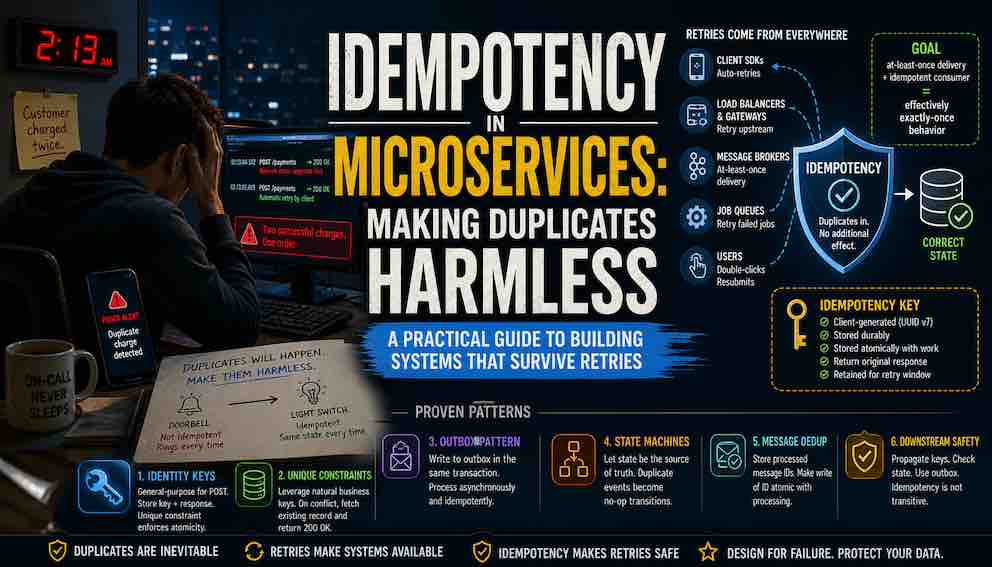

Idempotency in Microservices: Making Duplicates Harmless

A practical guide to building systems that survive retries

Retries are not edge cases in distributed systems—they are the default, and they inevitably create duplicate requests. Without idempotency, those duplicates turn harmless network failures into real data corruption: double charges, duplicate orders, inconsistent state. This guide explains how idempotency works in practice, from API design (PUT vs POST) to patterns like idempotency keys, database constraints, state machines, and the outbox pattern. By the end, you’ll understand how to design systems where retries are safe—and duplicates simply don’t matter.

The 2:13 AM call

It's 2:13 AM. Your phone buzzes. A customer placed one order and was charged twice.

You open the logs. Here's what you find:

Request one: POST /payments → 200 OK — but the response packet was dropped by a flaky network switch before reaching the client

Request two: Same request, automatically retried by the client SDK 500 milliseconds later → another 200 OK

Two successful charges. One order. One very angry customer.

This is not a bug in the traditional sense. The network failed. The client did exactly what it was supposed to do — retry an apparently failed request. Your system did exactly what it was built to do — process each request as it arrived.

The problem is that your system was built assuming it would only be asked once.

That assumption is wrong in distributed systems. It has always been wrong. And idempotency is the design discipline that corrects it.

What idempotency actually means

The formal definition is simple: an operation is idempotent if performing it multiple times produces the same result as performing it once.

What kind of operation? Any state-changing action: an API call, an event consumed from a queue, a background job, a webhook handler, or a scheduled cron job.

And what are we duplicating? The same instruction arriving multiple times. The same API request sent twice after a timeout. The same Kafka message delivered again after a rebalance. The same webhook payload resent because the first response was lost.

Your APIs, event handlers, and job processors need to be idempotent.

The critical insight: idempotency does not prevent duplicates — it makes them harmless. Duplicates will arrive. The only question is whether your system corrupts data when they do.

HTTP semantics: what the spec already tells you

The HTTP specification classifies methods as safe and idempotent.

Safe methods — GET, HEAD, OPTIONS — have no side effects. You can call them a thousand times and nothing changes.

Idempotent methods — PUT and DELETE — can be retried because repeating them should leave the server in the same state after the first request.

POST is neither safe nor idempotent by definition.

But here is where some engineers get tripped up: the spec doesn't make your system idempotent. Your implementation does.

A PUT endpoint is only idempotent if you actually implement it that way. If your PUT appends to a log, increments a counter, or sends a notification on every call, it violates the contract. The spec declares intent. Your code must deliver the behavior.

The same applies to DELETE. Calling DELETE twice should return the same result — usually 200 OK or 404 Not Found — and should never delete the same resource twice. That's your job to implement.

GET is idempotent only because it has no side effects. The moment your GET endpoint increments a view count or logs access for billing, it breaks idempotency and, more seriously, breaks the safe method contract.

Understanding the spec matters because it sets expectations for anyone calling your API. But never assume that using PUT or DELETE automatically makes you safe. The spec is a promise you must keep.

Where retries actually come from

Engineers tend to think of retries as the explicit retry(3) calls they write themselves. The reality is that retries emerge from every layer of a distributed system, often invisibly:

Client SDKs ship with retry logic enabled by default. Your mobile app, your internal service clients, even curl --retry — they all retry on timeouts and 5xx responses without any code you wrote.

API gateways and load balancers retry failed upstream connections. A single client request can arrive at your service twice because the gateway decided the first upstream attempt was too slow.

Message brokers guarantee at-least-once delivery. Kafka replays messages during consumer group rebalances and after uncommitted offsets. SQS standard queues redeliver messages that weren't deleted within the visibility timeout. Your consumer will see duplicates — not in exotic failure scenarios, but in normal operations like deployments.

Background job queues retry failed jobs. A job that timed out but actually completed will run again.

Users double-click submit buttons. Mobile apps resubmit when connections drop. Your own load tests replay traffic.

Duplicates are not edge cases. They are guaranteed consequences of the mechanisms we use to make systems reliable. Every retry policy, every timeout, every broker redelivery is a potential duplicate. Design for this from the start.

Why Idempotency Often Falls Short (and Why That Matters in Production)

Here is a pattern worth noticing: idempotency tends to get added for payments. Maybe for orders. But for everything else, there is often an assumption that duplicates won't happen—or won't matter.

That assumption holds—until it doesn't.

Duplicates often go unnoticed under normal conditions. A system runs for months without incident. Then one day: a network partition, a misconfigured gateway, a client SDK change, a job that crashes and restarts. Suddenly, the same operation runs twice in places you never considered.

That logging endpoint that increments a counter? It now overcounts.

That PUT /users/123/status that sends a notification? It now sends two.

That deletion endpoint tied to billing or quotas? It now charges or deducts twice.

The pattern is simple: any unguarded side effect will execute again if the operation runs again. And side effects are everywhere—database writes, external API calls, message publications, emails, analytics updates.

Here are two critical points worth examining.

First, PUT and DELETE are only idempotent if your implementation is. A PUT that triggers a notification on every call is not idempotent. A DELETE that emits an event or increments a counter on every execution is not idempotent. The specification sets an expectation. Your code has to uphold it.

Second, the need for idempotency is not determined by the HTTP method—it is determined by the cost of duplication.

A retried POST /payments clearly needs protection. But what about POST /analytics/event? Under normal conditions, a duplicate might not matter. But if that analytics data later feeds billing, quotas, or user limits, a duplicate is no longer harmless.

The real question is not "Is this POST or PUT?"

It is: "What happens if this runs twice?"

So what does systematic idempotency look like?

It means treating idempotency as a design concern, not an afterthought:

- For every state-changing operation, ask: “If this runs twice, what breaks?”

- For POST endpoints, use idempotency keys or design the operation around a client-supplied identifier

- For PUT and DELETE, audit side effects so they do not trigger twice

- For message consumers, implement deduplication or idempotent processing

- For background jobs, ensure work units can be safely retried

This is work. There’s no way around it.

But the work is front-loaded. The alternative is debugging data corruption under pressure—trying to explain how something that “should only happen once” happened twice.

Not every operation needs idempotency. Pure reads don’t. Some analytics and logging pipelines tolerate duplicates by design.

But for any operation where duplication causes real harm—financial, operational, or user-facing—you need to assume it will happen.

Not because it happens every day.

But because, eventually, it will happen once—and once is enough.

Idempotency vs. deduplication vs. exactly-once

These three terms are frequently conflated. They represent different strategies for solving the same underlying problem: how do you prevent duplicate processing from causing duplicate side effects?

All three exist because distributed systems generate duplicates. Retries, rebalances, timeouts, and network failures mean the same instruction can arrive multiple times. Each of these strategies answers the question "what do we do about that?" differently.

Deduplication tries to stop duplicates at the door. You detect an incoming request as a repeat and discard it before it triggers any side effects. When it works, the system behaves as if the duplicate never arrived.

The challenge is that deduplication requires remembering what you've already seen — and that memory must be durable, consistent, and available across failures. At scale, maintaining that reliably becomes non-trivial. But when you need to guarantee that a side effect absolutely never happens twice (e.g., a one-time coupon code redemption), deduplication is the right tool.

Idempotency takes a different approach. It allows duplicates to arrive but ensures they cause no additional effect. You may or may not explicitly detect the duplicate; what matters is that processing the same operation multiple times results in the same final state as processing it once. The duplicate is not prevented — it is neutralized.

This is lighter-weight than deduplication because you don't need perfect detection. You just need the operation itself to be harmless when repeated. That is why idempotency is the more common pattern.

Exactly-once processing is the guarantee that an operation's effects occur exactly once. In theory, this is the ideal. In practice, achieving true end-to-end exactly-once semantics in distributed systems requires coordination across components — often involving consensus protocols or tightly coupled state management — which introduces latency, complexity, and availability trade-offs. For most systems, this is either too expensive or unnecessary.

A more practical model for real systems:

At-least-once delivery + idempotent consumer = effectively exactly-once behavior

Your message broker may deliver messages more than once — that's at-least-once delivery. Your consumer processes duplicates safely — that's idempotency. Together, they produce the same observable outcome as exactly-once processing, without requiring global coordination.

The common thread

All three strategies exist because duplicate side effects are the problem. Deduplication prevents duplicates from reaching the side effect. Idempotency makes duplicate side effects harmless. Exactly-once tries to prevent duplicates from occurring at all.

Choose based on your tolerance for complexity, your need for perfect prevention versus harmless repetition, and whether your side effects can be made idempotent in the first place.

Implementation patterns for idempotency

Pattern 1: Idempotency keys

How it achieves idempotency: The server remembers the result of the first operation using a client-provided unique key. When a duplicate request arrives with the same key, the server returns the stored result instead of executing the operation again. The duplicate is never executed.

Implementation details: The client generates a unique key and sends it in a request header. Idempotency-Key is the conventional name, used by Stripe, PayPal, and others.

The server stores the key alongside the response from the first successful execution. On subsequent requests with the same key, it returns the stored response without re-executing the operation.

Critical requirements:

Storage must be durable. An in-memory store loses all keys on restart. A delayed retry arriving after a deployment would be processed as new. Persist keys to a database table or a Redis instance with persistence enabled.

The retention window matters. Keep keys for at least as long as your maximum retry window. Shorter, and a legitimately delayed retry becomes a duplicate. Longer, and you accumulate unbounded storage that needs a cleanup job.

Atomicity is critical. The key must be stored in the same atomic operation as the work it guards. If you check the key store, perform the operation, and then store the key in three separate steps, a failure between steps two and three leaves you with completed work and no key — the next retry processes the operation again. The key write and the operation must succeed or fail together.

In practice: if your operation touches a relational database, store the idempotency key in the same database in the same transaction. A unique constraint on the key column enforces this naturally.

Who generates the key? This is a client-side responsibility. The client must generate a new key per logical operation and must reuse that same key across all retries of that operation. If the client generates a fresh key on each retry, idempotency is completely defeated.

Pattern 2: Database unique constraints

How it achieves idempotency: The database itself prevents duplicate writes. If the same logical operation tries to insert the same data twice, the unique constraint rejects the second insert. The duplicate is prevented at the storage layer.

Implementation details: Create a unique constraint on natural business keys. For an orders table, that might be (customer_id, cart_id). The database will reject a second insert for the same cart.

The crucial detail is what you do when the constraint fires. One thing that comes to mind is to return a 409 Conflict. This breaks idempotency. The client retried expecting the same response it would have received the first time, and instead got an error — now it doesn't know whether the operation succeeded or failed.

The correct response: catch the constraint violation, fetch the existing record, and return 200 OK with that record. The desired state is already true. The duplicate request succeeded in the only sense that matters.

How this handles race conditions: If two identical requests arrive simultaneously, both will pass any application-level read check and attempt the insert. The unique constraint catches this — exactly one insert succeeds, the other throws a constraint violation which you handle as above. This is why the constraint is the enforcement mechanism, not an application-level read-before-write check.

Pattern 3: State machines

How it achieves idempotency: The system's current state tells you whether an operation has already happened. Processing a duplicate operation checks the state, sees that the work is already done, and exits without taking action.

Implementation details: An order moves through states: PENDING → PAID → FULFILLING → SHIPPED → DELIVERED.

Your payment processor sends a webhook notifying you that the customer's payment succeeded. Your handler looks up the order and checks its current state:

- If the order is PENDING, this is the first successful payment notification. You transition the order to PAID, release inventory, and send a confirmation email.

- If the order is already PAID or beyond, this is a duplicate notification. You do nothing and return success.

Now when the duplicate webhook arrives — because payment processors retry until they receive a successful response — the order is already in PAID. Your handler does nothing. The duplicate is harmless.

The power of this pattern: You don't need a separate idempotency key store or a deduplication table. The state itself is the record of what has happened. The system naturally rejects operations that would move it backward or repeat a completed transition.

Where this pattern shines: Event-driven workflows, webhook handlers, approval processes, and any multi-step operation where each transition is one-way. You never need to go from SHIPPED back to PAID, so the state machine enforces correctness and idempotency simultaneously.

The limitation: State machines require the transition logic to be explicit and the state to be stored durably. State kept only in memory — a flag that says "email sent" but isn't persisted to the database — gives you nothing to check against when a duplicate arrives after a restart. Every state check must read from durable storage.

Pattern 4: Inbox pattern (for message consumers)

How it achieves idempotency: The consumer stores the ID of every message it has successfully processed. When a duplicate message arrives (due to broker re-delivery), the consumer checks its inbox, sees the ID already exists, and skips processing. The duplicate is ignored.

Implementation details: Before processing a message, check an inbox table for the message ID. If it exists, skip processing and acknowledge the message. If not, process the message and insert its ID into the inbox table within the same database transaction as any state changes.

The atomicity requirement is critical. If your operation updates a database, the inbox insert and the business data update must happen in the same transaction. If the transaction commits, the message ID is stored — future duplicates will be skipped. If it fails, both roll back — the next delivery will process the message again.

A concrete example: Your order service consumes a "payment_succeeded" event from Kafka. The message has a unique ID. Your consumer opens a database transaction, checks the inbox table, finds nothing, updates the order status to "paid", inserts the message ID into the inbox table, and commits. Then it acknowledges the message to Kafka.

If the consumer crashes after the database commit but before the acknowledgement, Kafka redelivers the message. Your consumer checks the inbox, finds the ID already present, skips processing, and acknowledges the message. The order status is not updated again.

This pattern is explained in detail in the transaction inbox article.

A note on naming: This is the inbox pattern. Do not confuse it with the outbox pattern, which solves a different problem: reliably sending messages by storing outgoing messages in a database transaction before forwarding them to a broker.

The race condition that breaks naive implementations

This deserves its own section because it silently invalidates many idempotency implementations — and it applies across nearly every pattern we just discussed.

The scenario: Two identical requests arrive nearly simultaneously. A client bug. A load balancer duplicate. A retry so fast the original is still in flight.

With a naive read-before-write check:

- Request A reads the store. Key not found(i.e. confirmation that the request has not been processed before). Proceeds.

- Request B reads the store, milliseconds later. Key still not found — A hasn't written yet. Proceeds.

Both process. Both charge the card.

This same race appears in every pattern:

- Idempotency keys: Two requests with the same key both pass the "not found" check.

- Inbox/deduplication: Two consumer instances both check before either writes the message ID.

- Database constraints alone: Checking first, then inserting, leaves the race window open.

The fix is the same: Make the insert the enforcement mechanism, not the check. Both requests try to insert the key into a table with a unique constraint. Exactly one succeeds. The other gets a constraint violation. The constraint is the gate, not the read.

Why storage choice matters: This is why idempotency stores are often relational databases rather than caches. You need ACID transactions and unique constraints. Redis can work if you accept a narrow race window during failover. For payments? Use the database.

The bottom line: If your implementation reads first, decides, then writes — you have a race condition. It may be dormant. But it is waiting.

Idempotency is not transitive

Your system can be perfectly idempotent and still cause harm, because your dependencies may not be.

If your idempotent POST /payments endpoint calls a payment gateway without an idempotency key, a retry from your service sends two charge requests to the gateway. Your idempotency logic never fires — it only runs on incoming requests from your clients, not on outgoing requests to your dependencies.

Every external call in an idempotent operation must itself be idempotent. This means:

- Propagate the client's idempotency key to downstream services that support it (Stripe, PayPal, Twilio, and most major APIs do)

- For services that don't support idempotency keys, check state before calling: "Did we already send this SMS?" If the answer is yes, skip the call

- Use the outbox pattern to ensure downstream calls happen exactly once even in the presence of crashes

State machines are particularly valuable here. Before calling an external service, the state check acts as a guard: EMAIL_SENT prevents sending the email again, regardless of whether the email provider supports idempotency keys.

Partial failures and the fundamental distributed systems paradox

Consider this failure sequence:

- Your service receives a payment request with an idempotency key

- The key is not found — this is the first attempt

- The payment is processed and the database commits

- Before the response reaches the client, the network drops the packet

- The client retries with the same idempotency key

- Your service finds the key, returns the stored 200 OK

- The client receives the confirmation

This is the happy path for the unhappy scenario. Idempotency absorbed the retry.

Now consider a harder failure:

- Steps 1–3 succeed: payment processed, database committed

- Before storing the idempotency key, your service crashes

- On restart, there is no stored key

- The client retries — the key is not found — the operation processes again

This is the atomicity failure. The idempotency key must be stored in the same transaction as the operation it guards. If the key store and the operation's database are different systems, you need a distributed transaction or the outbox pattern to bind them together. Most teams choose the outbox.

There is also the philosophical paradox that distributed systems engineers eventually confront: the operation succeeded, the database committed, the payment went through — but the client received no response and believes it failed. The system and the client hold contradictory beliefs about reality. Idempotency doesn't resolve this paradox; it manages it. When the client retries, idempotency gives it the response it needed, closing the loop.

Testing idempotency

Idempotency is invisible when it works, which means it's easy to ship without ever verifying it. Add these to your test suite:

Duplicate request tests. Send the same request twice with the same idempotency key and assert that the response is identical and that exactly one record exists in the database. Run these for every state-changing endpoint.

Concurrent request tests. Send two identical requests simultaneously — the race condition scenario. Assert that only one operation was processed. This requires actually firing concurrent requests, not sequential ones.

Key expiry tests. Verify that an idempotency key that has aged out is treated as a new operation. Verify that a key within the retention window is correctly deduplicated.

Partial failure tests. Simulate a crash between the database write and the key store write. Assert that a retry processes correctly — either the operation runs again safely (because the key was never stored) or the key was stored atomically and the retry returns the cached response.

Consumer idempotency tests. Deliver the same Kafka message twice to your consumer in the same test run. Assert that side effects occur exactly once.

Observability

Because idempotency silently absorbs duplicates, you need instrumentation to know it's working.

Track your retry rate. Count how many requests arrive with an idempotency key you've already seen. A sudden spike indicates either a misbehaving client (stuck retry loop), a network issue causing mass retries, or a bug in your client SDK.

Track key store latency. The idempotency check adds latency to every request. At high volume, a 50ms key lookup can become your bottleneck. Monitor it as you would any database query.

Track key store size. Count unique keys created per time period. Project forward to ensure your cleanup job keeps up with growth. At a billion operations per day with a 30-day retention window, you need storage for 30 billion records.

Log retry correlations. When a retry arrives and matches an existing key, log both the current request ID and the original request ID. This makes it possible to reconstruct what happened during a failure sequence when debugging an incident.

Alert on unexpected new processing. If a request with an idempotency key is processed as new when a duplicate existed but wasn't found, that indicates either a race condition or a key store failure. This should be a high-priority alert.

Where not to apply idempotency

Idempotency has real costs — storage, latency, cleanup jobs, code complexity. Apply it where duplication causes harm, and skip it where it doesn't.

Read operations are already idempotent by nature. GET /orders/123 can be called a thousand times without consequence. No idempotency infrastructure needed.

Analytics and telemetry often tolerate duplicates. A page view counted twice might be acceptable. Know your tolerance before building deduplication infrastructure for every metric pipeline.

Naturally idempotent writes need no extra work. Setting last_seen_at = now() twice is harmless. Updating a configuration value to the same setting is harmless. If the duplicate write produces the same state, you're done.

Low-stakes operations may not justify the complexity. Idempotency for a "mark as read" feature on an internal tool is probably over-engineering. Idempotency for a payment, a payroll job, or a contract generation endpoint is essential.

The cost of data corruption is almost always higher than the cost of idempotency. But the inverse — adding complexity everywhere in the name of correctness — produces systems that are hard to understand and maintain. Apply judgment.

The mental model to carry forward

After working through the patterns and the race conditions and the failure scenarios, one guiding principle emerges:

Idempotency is not about preventing duplicates. It is about ensuring duplicates cause no harm.

This reframes the entire design question. You stop trying to build a perfect duplicate-free system — because you cannot. The network will fail. Brokers will redeliver. Clients will retry. Users will double-click.

These mechanisms are not bugs. They are features. They are what make distributed systems resilient.

Idempotency is the complement to resilience. Retries make systems available. Idempotency makes retries safe. Together, they let you build systems where failure at any layer is recoverable — not by preventing failure, but by ensuring that recovery doesn't corrupt your data.

When you design an endpoint, a consumer, or a job, do not ask "how do I stop duplicates from happening?"

Ask: "If this runs twice, what breaks?"

Then design for that answer.

A system built this way can handle retries, rebalances, timeouts, and crashes without data corruption. Not because duplicates never arrive. But because when they do, they are harmless.

Quick reference

Idempotency is not optional for state-changing operations. It is a core requirement of distributed systems design. Here is what that means in practice.

What the HTTP spec promises (and what it doesn't):

- Idempotent by spec: GET, HEAD, OPTIONS, PUT, DELETE — but only if your implementation honors the contract

- Not idempotent by spec: POST — you must protect it with idempotency keys or design it around client-supplied identifiers

Implementation patterns, from cleanest to most general:

- Natural idempotency via PUT and client-supplied identifiers — no extra infrastructure, just good design

- State machine guards — let the state itself be the record of what has happened

- Unique database constraints on natural business keys — your last line of defense

- Idempotency key tables with unique constraints — the general-purpose solution for POST

- Inbox Pattern — for consumers reading from Kafka, SQS, or any at-least-once broker

- Outbox pattern — for atomic "database write plus downstream call" correctness

Non-negotiable requirements for idempotency keys:

- Store durably — memory is not enough; a delayed retry after a restart will cause a duplicate

- Store atomically with the operation — the key write and the work must succeed or fail together

- Return the original response on duplicate — never return 409 Conflict; the client retried expecting success

- Retain keys for at least your maximum retry window — seven to thirty days is typical

- Let the client generate the key — the server cannot know what is a retry versus a new operation

Failure modes you must test before going to production:

- Concurrent identical requests — the race condition that breaks read-before-write checks

- Crash between operation and key store write — atomicity failure leaves you with no record

- Expired key followed by delayed retry — retention boundary shorter than duplicate window

- Duplicate messages from broker — consumer-side idempotency is not optional with at-least-once delivery

The bottom line: If your system changes state and you have not explicitly designed for idempotency, you have a latent bug. It may surface tomorrow. It may surface next year. But it will surface.

About N Sharma

Lead Architect at StackAndSystemN Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education—explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.