
Last Updated: April 18, 2026 at 11:30

CQRS in Microservices Explained: When to Separate Reads and Writes, and Why It Matters in Real Systems

A practical, experience-driven guide to CQRS as a spectrum — from simple code separation to event-driven architectures, including trade-offs, failure modes, and real-world evolution patterns

CQRS (Command Query Responsibility Segregation) is often treated as a complex architectural pattern, but in reality it addresses a simple and critical problem: reads and writes in modern systems behave very differently under load and complexity. This article explains CQRS not as a binary design choice, but as a spectrum of increasing separation, from logical code structure to fully event-driven distributed systems. It shows why many production systems become slow or fragile when a single data model is forced to serve both transactional writes and high-volume reads. The deeper insight is that many of these problems are not caused by slow databases, but by misaligned responsibilities between how data is written and how it is consumed.

The real problem

You have an e-commerce system. It works fine. Then something changes. The business asks for a new dashboard — order summaries with customer details, product names, and shipping status, all on a single screen. You write a query joining orders, customers, products, and shipments. It looks harmless in development. In production, with real data, it takes three seconds — and keeps getting worse.

Then something else breaks. Your checkout flow, which used to take 200 milliseconds, now takes 800. You investigate and find the same database is doing two very different jobs: serving real-time transactional writes and running heavy analytical queries for reporting. The reporting workload competes with checkout for CPU, memory, and disk I/O, and depending on the database and isolation level, it can also hold locks that writes must wait for. The checkout flow starts waiting behind it.

Then the code itself starts to feel fragile. Every API response becomes a stitched-together shape pulled from five different tables. Every change requires touching multiple joins. Developers hesitate before making updates because one small modification might break unrelated queries.

At this point, the problem is no longer performance alone — it is structure.

Here is the observation that quietly opens the door to CQRS: Most systems do not become slow because databases are inherently slow, but because we force one data model to serve two fundamentally different workloads — fast, consistent writes and flexible, high-volume reads.

What if writing data and reading data were allowed to evolve independently? That single question changes how you think about system design.

The core idea

At its heart, CQRS is a way of structuring a system where the responsibility for writing data and the responsibility for reading data are treated as two separate concerns.

The starting point is simple, but it changes how you think about the system. Most applications naturally grow around a single model. The same code base handles creating data, updating it, and also fetching it back for display. Over time, this single model starts to carry two very different kinds of work.

On one side, the system is constantly applying changes. It is validating inputs, enforcing business rules, checking inventory, processing payments, and ensuring that every update keeps the system in a valid state. This is the write side of the system, where correctness matters most, because every operation permanently changes state.

In CQRS, these operations are called commands. A command represents an intention to change something in the system — create an order, update stock, cancel a payment. The focus is on applying the change safely and consistently. Once the change is applied, the system simply acknowledges that it has been done.

On the other side, the system is continuously serving data back to users and services. It is fetching order histories, listing products, showing stock levels, and building screens that represent the current state of the business. This is the read side of the system, where the focus is on returning data quickly and in a shape that is easy to consume.

These operations are called queries. A query exists to retrieve information. It does not change anything in the system; it only reflects what is already there.

When you look closely at these two responsibilities, something important becomes clear. The way data is written and the way data is read often evolve in different directions.

Writing data tends to demand structure and control. The system needs to protect consistency, enforce rules, and ensure that every state transition is valid. This naturally leads to models that are carefully designed around business logic and transactional integrity.

Reading data, on the other hand, tends to be shaped by usage. Data is often needed in combinations that do not match how it is stored. A single screen might require information from multiple entities, pre-joined and pre-calculated so it can be displayed quickly. Over time, read paths start to optimise for performance and convenience rather than strict structure.

CQRS builds on this observation and makes it explicit in the system design. It allows the write side and the read side to be structured differently, and to evolve independently. The same underlying business data can exist in different forms depending on whether it is being changed or being consumed.

That is the core idea of CQRS.

And once this separation becomes clear, the next realisation follows naturally. The separation does not exist in a single fixed form. It exists on a spectrum.

The spectrum: how much separation do you actually need?

You do not “use CQRS” or “not use CQRS” as a switch. Systems tend to move toward it gradually, usually in response to pressure — slower queries, growing complexity, or teams stepping on each other’s changes.

Level zero — Traditional CRUD

At this level, everything flows through a single model.

The same application layer handles writing data and reading it back. The same database schema supports both. The same API endpoints are typically used for both kinds of operations, often differentiated only by the HTTP method or request type.

Under the surface, there is no intentional separation between how data is written and how it is read. A single domain model or data structure is responsible for both concerns.

This works well in early systems because the model is still small and simple. The same structure can comfortably represent how data is stored and how it is retrieved, without creating tension between the two.

Level one — Logical separation, same database

At this level, the system starts to recognise that reading and writing behave differently, even if they still operate on the same underlying data.

The database does not change. The schema does not change. What changes is how the application is organised.

Write operations are placed in one part of the codebase — often called commands — and read operations are placed in another — queries. They may be different classes, services, or modules, and they are treated as separate responsibilities in the system design.

So the same data is still being used, but the paths to access it are now deliberately separated.

This separation matters because it changes how developers think about the system. A change in a write operation is no longer automatically tied to how data is read. A query can evolve in its own direction without forcing changes in the write logic. Over time, this reduces accidental coupling inside the codebase, even though the underlying database remains the same.
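To make level one concrete, here is a minimal Python sketch. It assumes an in-memory SQLite database, and the names `PlaceOrder`, `OrderCommands`, and `OrderQueries` are hypothetical, not any framework's API. Both classes share one connection and one `orders` table; the only change is that writes and reads live in deliberately separate code paths.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class PlaceOrder:
    """Command: an intention to change state."""
    customer_id: int
    total_cents: int


class OrderCommands:
    """Write path: validates, changes state, returns only an acknowledgement."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn

    def place_order(self, cmd: PlaceOrder) -> int:
        if cmd.total_cents <= 0:
            raise ValueError("order total must be positive")
        with self.conn:  # commits on success, rolls back on error
            cur = self.conn.execute(
                "INSERT INTO orders (customer_id, total_cents) VALUES (?, ?)",
                (cmd.customer_id, cmd.total_cents),
            )
        return cur.lastrowid  # the new order id


class OrderQueries:
    """Read path: same table, never modifies anything."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn

    def orders_for_customer(self, customer_id: int) -> list[tuple[int, int]]:
        return self.conn.execute(
            "SELECT id, total_cents FROM orders WHERE customer_id = ? ORDER BY id",
            (customer_id,),
        ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total_cents INTEGER)"
)
commands, queries = OrderCommands(conn), OrderQueries(conn)
order_id = commands.place_order(PlaceOrder(customer_id=7, total_cents=4999))
print(queries.orders_for_customer(7))  # [(1, 4999)]
```

The command returns only the new ID. Anything a screen needs to display goes through `OrderQueries`, which can later be pointed at a different model without touching the write logic.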

Level two — Model separation, same database

Now the separation moves deeper.

The write side and read side no longer just live in different parts of the code — they start using different models.

The write model is designed around correctness. It is often normalised, structured around business rules, and focused on maintaining consistency during updates.

The read model is designed around usage. It is shaped by how data is consumed, often denormalised or precomputed so that queries become simpler and faster.

Both still live in the same database, so they can be kept in sync by updating the write tables and the read tables in the same transaction, either in application code or with database triggers.

Level three — Physical separation, different databases

At this point, the separation becomes architectural.

The write side uses one database, optimised for transactional consistency and correctness. The read side uses another store entirely, chosen for how data is accessed: a search engine such as Elasticsearch, a cache such as Redis, or another specialised read store.

Now the system explicitly accepts that reading and writing are different workloads with different storage needs. Synchronisation between them becomes part of the system design.

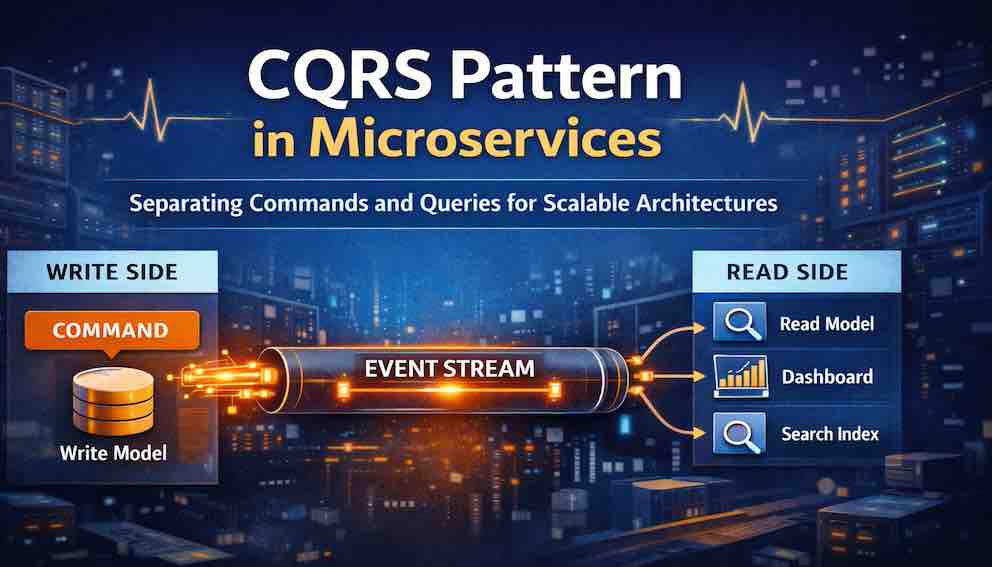

Level four — Event-driven CQRS

At this level, the write side stops directly updating read models.

Instead, it emits events whenever state changes. These events are consumed by separate systems that build and maintain their own read models independently.

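The read side is no longer tightly coupled to the write transaction at all. The system shifts from request-driven updates, where the write path updates every view itself, to event-driven ones, where each view updates itself in response to events.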

The trade-off is that reads may not immediately reflect writes. The system becomes eventually consistent, and that behaviour has to be consciously designed for.

This is the most powerful form of CQRS — and also the most operationally demanding.

Most systems never need to go this far. Many gain real value from level one or level two long before they ever introduce events or separate databases.

Why CQRS exists: the deeper motivation

"CQRS is for scalability" is the surface answer. Here is what lies beneath it.

Write model complexity versus read simplicity. The write side of a system is hard. You need validation, business invariants, transaction boundaries, and consistency guarantees. Creating an order requires checking inventory, validating payment, applying discounts, and ensuring the customer exists. The read side is different — you just need to return data quickly. An order summary screen does not need to enforce business rules. It just needs to show what happened. Why punish your writes with read complexity? Why punish your reads with write-optimised schemas?

Read-heavy systems. In most production systems, reads outnumber writes by a significant margin — often 90:10, sometimes 99:1. A traditional normalised schema is designed for write integrity, not read speed. As read volume grows, the joins required by that schema start to hurt. CQRS lets you build a read side designed entirely for reading.

API evolution without fear. Your core domain model for orders has served you well for two years. Now the product team wants a new dashboard with different aggregations and filters. In a traditional approach, you extend the existing model. Add fields. Add joins. Add complexity. Over time, the model becomes bloated, and changing the write model becomes dangerous because reads depend on it. With CQRS, you add a new read model. The write model stays untouched.

Team autonomy. Separate teams can own the read side and write side. The write team focuses on domain logic and correctness. The read team focuses on query performance and API design. They communicate through events or shared interfaces, not through shared code.

A concrete example: e-commerce orders

Without CQRS

You have an orders table and an order items table. Normalised. Clean. To display the order summary page, you join across orders, customers, order items, products, and shipments. It works — until your data grows. The query takes half a second on average, sometimes two seconds under load. Adding a new field means modifying this complex join. You are afraid to change the orders table schema because so many read queries depend on it.

With CQRS (level two)

You create a separate read model: an order summary view. A single denormalised table that contains everything needed for the order summary — customer name, product names, shipping status, totals. Pre-joined. Pre-computed.

The query becomes a single indexed lookup with no joins, typically taking milliseconds rather than seconds.

When an order is created or updated, the write side updates both the normalised orders tables and the denormalised order summary view — in the same transaction. Strong consistency. The read model is always correct.
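A minimal sketch of this level two setup, assuming SQLite and a hypothetical schema with `customers`, `products`, `orders`, `order_items`, and an `order_summary` read table. The join runs once per order at write time, inside the same transaction as the normalised inserts, so the read becomes a primary-key lookup.

```python
import sqlite3

SCHEMA = """
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT NOT NULL, price_cents INTEGER NOT NULL);
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER NOT NULL, status TEXT NOT NULL);
CREATE TABLE order_items (order_id INTEGER NOT NULL, product_id INTEGER NOT NULL, qty INTEGER NOT NULL);
-- Read model: one denormalised row per order, shaped for the summary screen.
CREATE TABLE order_summary (
    order_id INTEGER PRIMARY KEY, customer_name TEXT, product_names TEXT,
    total_cents INTEGER, status TEXT
);
"""


def place_order(conn, customer_id, items):
    """Write normalised rows AND the summary row in one transaction."""
    if not items:
        raise ValueError("an order needs at least one item")
    with conn:  # both models commit together or roll back together
        order_id = conn.execute(
            "INSERT INTO orders (customer_id, status) VALUES (?, 'PLACED')", (customer_id,)
        ).lastrowid
        conn.executemany(
            "INSERT INTO order_items (order_id, product_id, qty) VALUES (?, ?, ?)",
            [(order_id, product_id, qty) for product_id, qty in items],
        )
        # The join runs once here, at write time, instead of on every read.
        rows = conn.execute(
            """SELECT c.name, p.name, p.price_cents * i.qty
               FROM orders o
               JOIN customers c ON c.id = o.customer_id
               JOIN order_items i ON i.order_id = o.id
               JOIN products p ON p.id = i.product_id
               WHERE o.id = ? ORDER BY p.name""",
            (order_id,),
        ).fetchall()
        if len(rows) != len(items):
            raise ValueError("unknown customer or product")  # rolls back everything
        conn.execute(
            "INSERT INTO order_summary VALUES (?, ?, ?, ?, 'PLACED')",
            (order_id, rows[0][0], ", ".join(r[1] for r in rows), sum(r[2] for r in rows)),
        )
    return order_id


def mark_shipped(conn, order_id):
    """Every write that affects the summary updates it in the same transaction."""
    with conn:
        conn.execute("UPDATE orders SET status = 'SHIPPED' WHERE id = ?", (order_id,))
        conn.execute("UPDATE order_summary SET status = 'SHIPPED' WHERE order_id = ?", (order_id,))


def get_order_summary(conn, order_id):
    """Query side: a single primary-key lookup, no joins."""
    return conn.execute("SELECT * FROM order_summary WHERE order_id = ?", (order_id,)).fetchone()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
with conn:
    conn.execute("INSERT INTO customers VALUES (1, 'Asha')")
    conn.executemany("INSERT INTO products VALUES (?, ?, ?)", [(10, "Mouse", 1500), (11, "Keyboard", 4500)])
oid = place_order(conn, 1, [(10, 2), (11, 1)])
mark_shipped(conn, oid)
print(get_order_summary(conn, oid))  # (1, 'Asha', 'Keyboard, Mouse', 7500, 'SHIPPED')
```

The cost of this design is visible in `mark_shipped`: every write path that touches data shown in the summary must remember to update it too.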

When you move to level four, the read model updates asynchronously. The write side emits an OrderPlaced event. A background consumer listens for that event and updates the order summary view. The write transaction completes faster. The read model becomes eventually consistent.

The result: query latency drops by an order of magnitude. The write model stays clean. Different teams can own different sides. Adding a new field to the order summary only touches the read model.

Event-driven CQRS: a different kind of system

Level four CQRS is not just more separation. It is a shift in how the system thinks.

Here is the flow. The application receives a PlaceOrder command. The write side validates, checks inventory, processes payment, and creates an order record in the write database. The write side then publishes an OrderPlaced event containing all the data needed to build read models — order details, customer information, product IDs, quantities, amounts.

That event goes to a message broker. And here is where things change.

The write model no longer knows who is reading. It does not know about the dashboard. It does not know about the search index. It does not know about the customer activity feed. It just emits what happened, and any number of consumers can subscribe and build their own views independently.

One consumer updates the order summary view. Another indexes the order in Elasticsearch. Another updates a real-time feed. Each read model is built independently, optimised for its own purpose, owned by its own team.
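The fan-out can be sketched in a few lines of Python. The `InMemoryBroker` below is a hypothetical stand-in for a real broker and delivers events synchronously, whereas real brokers deliver them asynchronously and possibly more than once. `OrderPlaced` and both projections are illustrative names. The point is that the write side calls `publish` and knows nothing about who is listening.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class OrderPlaced:
    event_id: str
    order_id: int
    customer_id: int
    customer_name: str
    total_cents: int


class InMemoryBroker:
    """Stand-in for a message broker: hands each event to every subscriber."""

    def __init__(self) -> None:
        self._subscribers: dict[type, list[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: object) -> None:
        for handler in self._subscribers[type(event)]:
            handler(event)


class OrderSummaryView:
    """Read model for the order summary screen."""

    def __init__(self) -> None:
        self.rows: dict[int, dict] = {}

    def on_order_placed(self, e: OrderPlaced) -> None:
        self.rows[e.order_id] = {"customer": e.customer_name, "total_cents": e.total_cents}


class CustomerActivityFeed:
    """A second, independently shaped read model built from the same event."""

    def __init__(self) -> None:
        self.items: dict[int, list[str]] = defaultdict(list)

    def on_order_placed(self, e: OrderPlaced) -> None:
        self.items[e.customer_id].append(f"Placed order #{e.order_id}")


broker = InMemoryBroker()
summary, feed = OrderSummaryView(), CustomerActivityFeed()
broker.subscribe(OrderPlaced, summary.on_order_placed)
broker.subscribe(OrderPlaced, feed.on_order_placed)

# The write side, after committing the order, only announces what happened.
broker.publish(OrderPlaced("evt-1", order_id=42, customer_id=7, customer_name="Asha", total_cents=7500))
```

Adding a search index or another view means adding one more `subscribe` call; the code that publishes `OrderPlaced` does not change.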

This is where systems stop being request-driven and start being event-driven. The write side stops dictating what data looks like to readers. Readers take ownership of their own representations.

This architecture relies on patterns that work together:

Outbox pattern ensures events are published reliably. The write side stores events in an outbox table as part of the write transaction, then a publisher sends them to the message broker. This prevents events from being lost if the publish step fails after a successful write.

Saga pattern coordinates distributed transactions across services. When an order is placed, a Saga might coordinate inventory reservation, payment processing, and shipment creation — each step emitting events that update read models.

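Inbox pattern records the IDs of events a consumer has already handled, so a redelivered event is ignored instead of being applied twice. Delivery is still at least once; the inbox makes processing effectively once and prevents duplicates from corrupting projections.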

CQRS does not live in isolation. It is part of an ecosystem.
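A minimal sketch of the Outbox pattern, assuming SQLite for the write database and a caller-supplied `publish(event_type, payload)` function standing in for the broker client (hypothetical, not a specific library). The order row and the outbox row commit in one local transaction; a separate relay publishes pending rows and marks each one only after `publish` returns.

```python
import json
import sqlite3
from typing import Callable

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER NOT NULL);
CREATE TABLE outbox (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    event_type TEXT NOT NULL,
    payload TEXT NOT NULL,
    published INTEGER NOT NULL DEFAULT 0
);
""")


def place_order(customer_id: int) -> int:
    """Record the state change and its event in the SAME local transaction."""
    with conn:
        order_id = conn.execute(
            "INSERT INTO orders (customer_id) VALUES (?)", (customer_id,)
        ).lastrowid
        conn.execute(
            "INSERT INTO outbox (event_type, payload) VALUES (?, ?)",
            ("OrderPlaced", json.dumps({"order_id": order_id, "customer_id": customer_id})),
        )
    return order_id


def relay_outbox(publish: Callable[[str, dict], None]) -> int:
    """Send pending events in order; mark each one only after publish() returns."""
    pending = conn.execute(
        "SELECT id, event_type, payload FROM outbox WHERE published = 0 ORDER BY id"
    ).fetchall()
    for row_id, event_type, payload in pending:
        publish(event_type, json.loads(payload))  # if this raises, the row stays pending
        with conn:
            conn.execute("UPDATE outbox SET published = 1 WHERE id = ?", (row_id,))
    return len(pending)


def broker_down(event_type: str, payload: dict) -> None:
    raise ConnectionError("broker unavailable")


delivered: list[tuple[str, dict]] = []
place_order(7)
place_order(8)
try:
    relay_outbox(broker_down)
except ConnectionError:
    pass  # nothing is lost: both events are still pending in the outbox
relay_outbox(lambda event_type, payload: delivered.append((event_type, payload)))
```

If the relay crashes after `publish` succeeds but before the row is marked, the event is sent again on the next run. The outbox therefore gives at-least-once delivery, which is why consumers pair it with the Inbox pattern.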

Eventual consistency: the part you cannot skip

In event-driven CQRS, there is a delay — usually milliseconds, sometimes seconds — between when a command is processed and when the read model reflects the change. A user creates an order and immediately navigates to their order list. The new order is not there yet. The read model has not caught up.

Is that acceptable? It depends entirely on the use case.

For many use cases, it is perfectly fine. An order list that shows new orders within a few seconds meets user expectations. For others — like account balances after a deposit — eventual consistency is not acceptable at all.

When eventual consistency is acceptable, you have several tools for managing the experience:

Read-your-own-writes — When a user performs a write, ensure their immediate subsequent reads reflect it. You can return the updated read model directly from the write operation, or read from the write database for that specific user for a short window.

UI hints — Be transparent. "Your order has been placed. It will appear in your orders list in a moment." Users are forgiving when you set expectations.

Polling or push updates — The UI polls for updates or maintains a WebSocket connection. When the read model catches up, the UI refreshes automatically.

Critical path fallback — For operations where consistency matters, bypass the read model entirely and query the write database directly.
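Read-your-own-writes can be sketched by tracking, per user, the log position of their latest write and comparing it with how far the projection has caught up. In this illustrative Python version, the write log, `ReadModel.catch_up`, and the in-process `last_write` map are all assumptions; a real system would use something like a database sequence number and keep the user's position in a session or token.

```python
from dataclasses import dataclass, field


@dataclass
class WriteStore:
    """Source of truth; `log` doubles as the ordered stream of changes."""
    log: list[tuple[str, str]] = field(default_factory=list)  # (user_id, order_id)

    def place_order(self, user_id: str, order_id: str) -> int:
        self.log.append((user_id, order_id))
        return len(self.log)  # log position of this write

    def orders_for(self, user_id: str) -> list[str]:
        return [o for u, o in self.log if u == user_id]


@dataclass
class ReadModel:
    """Eventually consistent projection; it only advances when catch_up runs."""
    applied: int = 0
    by_user: dict[str, list[str]] = field(default_factory=dict)

    def catch_up(self, store: WriteStore) -> None:
        for user_id, order_id in store.log[self.applied:]:
            self.by_user.setdefault(user_id, []).append(order_id)
        self.applied = len(store.log)


class OrderService:
    def __init__(self) -> None:
        self.store, self.read_model = WriteStore(), ReadModel()
        self.last_write: dict[str, int] = {}  # user -> position of their latest write

    def place_order(self, user_id: str, order_id: str) -> None:
        self.last_write[user_id] = self.store.place_order(user_id, order_id)

    def my_orders(self, user_id: str) -> tuple[str, list[str]]:
        # Use the read model only if it already includes this user's latest write.
        if self.read_model.applied >= self.last_write.get(user_id, 0):
            return "read_model", self.read_model.by_user.get(user_id, [])
        return "write_store", self.store.orders_for(user_id)  # critical-path fallback


svc = OrderService()
svc.place_order("u1", "o-100")
print(svc.my_orders("u1"))  # ('write_store', ['o-100'])
svc.read_model.catch_up(svc.store)
print(svc.my_orders("u1"))  # ('read_model', ['o-100'])
```

Users who have not just written keep reading from the projection even while it lags, so the fallback load on the write database stays limited to users who need it.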

Eventual consistency adds real complexity. You need to design for it, test for it, and make conscious decisions about it for every read model. If you cannot tolerate it, use level two CQRS instead: same database, same transaction, strong consistency.

The trade-offs: what you actually pay

CQRS is not a free lunch.

Data duplication. The same data lives in multiple places. The write model has normalised tables. The read models have denormalised projections. Storage is cheap, but duplication creates more places to update when something changes — and more places where things can drift out of sync.

Operational complexity. You now have more moving parts. Event producers, message brokers, event consumers, projection builders — each can fail, each needs monitoring, each needs its own deployment and scaling strategy. Your runbooks get longer.

Harder debugging. When a user reports a missing order, where do you look? The write database shows the order was created. The event log shows the event was published. The consumer logs show... what exactly? A single missing record might force you to trace through four separate systems — the API, the message broker, the consumer, and the projection database — just to answer "was this event ever processed?" Good observability becomes essential, not optional.

Eventual consistency surprises. Race conditions are subtle. A projection might fall behind during a traffic spike. An event might be processed out of order. These bugs might not appear in testing. They might appear only under specific production conditions, days after a deployment.

More infrastructure. Your write database is PostgreSQL. Your read database for search is Elasticsearch. Your read database for real-time status is Redis. You now operate three data systems, each with its own backup, recovery, monitoring, and maintenance overhead.

The honest conclusion: CQRS is a trade-off. You accept these costs when the benefits — read performance, write isolation, team autonomy, API flexibility — genuinely outweigh them. For many systems, they do not.

When NOT to use CQRS

This section is as important as everything above.

Simple CRUD applications. Your app is mostly create, read, update, delete. No complex business logic. No challenging query patterns. CQRS would add complexity with no measurable benefit.

Low scale. A few thousand users. Your database handles reads and writes without strain. Response times are fine. Solve real problems, not imagined future ones.

Small teams. Few developers, already stretched. Event-driven CQRS means adding message brokers, event consumers, projection builders, and eventual consistency handling. The operational burden will slow you down more than read latency is slowing your users.

No read-write asymmetry. Your reads and writes are similar in complexity and volume. CQRS helps when reads and writes are fundamentally different problems. If they are the same, the pattern gives you nothing.

Simple queries. Your reads are single indexed lookups. Fast. No joins, no aggregations, no full-text search across millions of rows. CQRS shines in complexity. Without it, you are adding infrastructure for no reason.

You are new to microservices. Master database per service first. Master API composition. Master basic event-driven communication. Add CQRS when you have a specific, measurable problem that only CQRS solves.

A quick decision test

Before introducing CQRS, ask yourself three questions:

Does my current read model cause measurable, documented pain — slow queries, database contention, fragile joins that block development?

Have I ruled out simpler solutions — read replicas, better indexes, query caching, materialised views within the existing schema?

Do I have the team and operational maturity to run event-driven infrastructure reliably?

If the answer to any of these is no, keep things simple. CQRS is a tool for a specific problem, not a default architecture.

CQRS versus related patterns

CQRS vs CRUD. CRUD uses the same model for all operations. CQRS separates commands from queries. CRUD is simpler. Start there.

CQRS vs Event Sourcing. This is the most common confusion. They are different patterns that are often used together. Event Sourcing stores the complete history of changes as a sequence of events — the current state is rebuilt by replaying them. CQRS separates read and write models and says nothing about how state is stored internally. You can have CQRS without Event Sourcing (traditional database on the write side, read models derived from it). You can have Event Sourcing without CQRS (replay events to rebuild state, but serve all reads from the same rebuilt model). They work well together but are not the same thing.

CQRS vs Database per Service. Database per Service says each microservice owns its own database. CQRS says reads and writes within a service might use different databases. They are orthogonal — you can apply both, either, or neither.

CQRS vs API Composition. API Composition handles queries that span multiple services by aggregating responses at the API layer. CQRS handles queries within a service by building optimised read models. They complement each other rather than compete.

Failure scenarios: what you need to plan for

CQRS systems tend to fail in ways that are not immediately visible. The system keeps running, APIs respond, databases look healthy — but the data that users see slowly stops matching the data that was written.

These are not dramatic failures. They are quiet ones. And that is what makes them difficult.

When read and write systems drift apart

One of the most common problems appears when the write side and the read side stop agreeing.

An order is created in the write database. Everything looks correct. But when a user opens the order history screen, the order is missing.

There are no obvious errors. The event was published. The consumer service was running. Logs show normal behaviour. Yet somewhere along the chain, the read model failed to update.

This kind of failure is difficult because nothing is obviously broken — only inconsistent.

To handle this, systems need a way to periodically verify alignment between write data and read projections. These reconciliation processes compare both sides and either repair differences or surface them when they exceed a safe threshold.
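A reconciliation job can be sketched as a diff between the two sides. This Python version assumes each side can be read as a mapping of `order_id` to `total_cents` (a simplification; a real job would page through both stores) and uses a hypothetical `max_auto_repair` threshold: small drift is repaired from the write model, which is the source of truth, while large drift is raised for a human to investigate.

```python
from dataclasses import dataclass


@dataclass
class DriftReport:
    missing: list[int]     # in the write model, absent from the read model
    mismatched: list[int]  # present on both sides, values differ
    orphaned: list[int]    # in the read model, absent from the write model

    @property
    def total(self) -> int:
        return len(self.missing) + len(self.mismatched) + len(self.orphaned)


def compare(write_rows: dict[int, int], read_rows: dict[int, int]) -> DriftReport:
    """Diff order_id -> total_cents as seen by each side."""
    shared = write_rows.keys() & read_rows.keys()
    return DriftReport(
        missing=sorted(write_rows.keys() - read_rows.keys()),
        mismatched=sorted(k for k in shared if write_rows[k] != read_rows[k]),
        orphaned=sorted(read_rows.keys() - write_rows.keys()),
    )


def reconcile(write_rows: dict[int, int], read_rows: dict[int, int],
              max_auto_repair: int = 10) -> DriftReport:
    """Repair small drift from the source of truth; surface large drift instead."""
    report = compare(write_rows, read_rows)
    if report.total > max_auto_repair:
        raise RuntimeError(f"{report.total} drifted rows exceed the threshold; investigate")
    for order_id in report.missing + report.mismatched:
        read_rows[order_id] = write_rows[order_id]  # the write model wins
    for order_id in report.orphaned:
        del read_rows[order_id]
    return report


write_side = {1: 7500, 2: 1200, 3: 990}
read_side = {1: 7500, 2: 1000, 4: 50}
report = reconcile(write_side, read_side)
print(report)  # DriftReport(missing=[3], mismatched=[2], orphaned=[4])
```

In an eventually consistent system, rows written in the last few seconds may legitimately be missing from the read model, so a real job would typically compare only records older than the expected projection lag.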

When events are processed more than once or not at all

Distributed systems rarely deliver messages exactly once in a strict sense. Instead, they tend to guarantee delivery “at least once,” which introduces its own set of challenges.

Sometimes a consumer processes an event but crashes before acknowledging it to the broker. The broker assumes the message was never handled, redelivers it, and the same event is processed again.

To make this safe, consumers need to recognise events they have already handled. This usually means storing processed event IDs and ignoring duplicates. This is often referred to as the Inbox pattern.
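A minimal sketch of an idempotent consumer in the spirit of the Inbox pattern, assuming SQLite for the read store and events as dictionaries carrying an `event_id` (a hypothetical shape). The key detail is that the inbox row and the projection update commit in the same transaction, so an event can never be marked as processed without its effect, or vice versa. The projection is a counter on purpose: applying an event twice would visibly corrupt it. The `ON CONFLICT ... DO UPDATE` upsert syntax requires SQLite 3.24 or later.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE inbox (event_id TEXT PRIMARY KEY);
CREATE TABLE customer_order_counts (
    customer_id INTEGER PRIMARY KEY,
    order_count INTEGER NOT NULL
);
""")


def handle_order_placed(event: dict) -> bool:
    """Apply an OrderPlaced event once; return False if it was a duplicate."""
    try:
        with conn:  # the inbox row and the projection change commit together
            conn.execute("INSERT INTO inbox (event_id) VALUES (?)", (event["event_id"],))
            conn.execute(
                """INSERT INTO customer_order_counts (customer_id, order_count) VALUES (?, 1)
                   ON CONFLICT (customer_id) DO UPDATE SET order_count = order_count + 1""",
                (event["customer_id"],),
            )
    except sqlite3.IntegrityError:
        return False  # event_id already recorded: a redelivery, safely ignored
    return True


first = handle_order_placed({"event_id": "evt-1", "customer_id": 7})
again = handle_order_placed({"event_id": "evt-1", "customer_id": 7})  # redelivered
other = handle_order_placed({"event_id": "evt-2", "customer_id": 7})
```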

When events arrive in the wrong order

Another issue appears when events are processed out of sequence.

For example, an update event arrives before the original creation event. The system tries to apply a change to something that does not yet exist in the read model.

This is not rare in distributed systems where messages travel through queues and partitions.

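One way to prevent this is to preserve ordering at the messaging layer by using the entity ID as the partition key, so all events for one entity go to the same partition and are consumed in order. Kafka, for example, guarantees ordering only within a partition. Another approach is to design consumers to tolerate partial state and wait until the base entity exists before applying updates.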
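The second approach, waiting until earlier events have arrived, can be sketched in Python under one assumption the text does not prescribe: the write side stamps each event with a per-order sequence number. Events that arrive early are parked and drained once the gap is filled; events at or below the last applied sequence are ignored, which also absorbs duplicates. `OrderEvent` and `OrderStatusProjection` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class OrderEvent:
    order_id: int
    seq: int     # per-order sequence number stamped by the write side
    status: str


@dataclass
class OrderStatusProjection:
    state: dict[int, str] = field(default_factory=dict)
    applied_seq: dict[int, int] = field(default_factory=dict)
    parked: dict[int, dict[int, OrderEvent]] = field(default_factory=dict)

    def handle(self, e: OrderEvent) -> None:
        expected = self.applied_seq.get(e.order_id, 0) + 1
        if e.seq < expected:
            return  # already applied: a stale or duplicate delivery
        if e.seq > expected:
            # Arrived early, e.g. an update before its creation: park it.
            self.parked.setdefault(e.order_id, {})[e.seq] = e
            return
        self._apply(e)
        # Drain parked events that are now next in line for this order.
        waiting = self.parked.get(e.order_id, {})
        while self.applied_seq[e.order_id] + 1 in waiting:
            self._apply(waiting.pop(self.applied_seq[e.order_id] + 1))

    def _apply(self, e: OrderEvent) -> None:
        self.state[e.order_id] = e.status
        self.applied_seq[e.order_id] = e.seq


p = OrderStatusProjection()
p.handle(OrderEvent(order_id=1, seq=2, status="SHIPPED"))  # update arrives first: parked
print(p.state)  # {}
p.handle(OrderEvent(order_id=1, seq=1, status="PLACED"))   # creation: applies 1, then 2
print(p.state)  # {1: 'SHIPPED'}
```

In practice parked events also need a timeout and an alert, since an event that never arrives would otherwise block that entity indefinitely.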

When projections need to be rebuilt

Read models are often derived structures. That means they can be rebuilt from the source events or from the write database.

But when schema changes or data corruption happens, entire projections may need to be rebuilt.

This sounds simple in theory, but in practice it requires careful design. Projections need to track their progress so they can resume from a known point if a rebuild fails halfway. Otherwise, rebuilding becomes fragile and time-consuming.

This is why good CQRS systems treat projections as rebuildable by design, not as permanent structures.
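A resumable rebuild can be sketched with a checkpoint that moves in lockstep with the projection. This Python version assumes SQLite, an in-memory list standing in for the event log, and events shaped as hypothetical `(order_id, amount)` pairs. Each batch updates the projection and the checkpoint in one transaction, so after a crash the rerun resumes from the last committed batch instead of starting over or double-counting.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE order_totals (order_id INTEGER PRIMARY KEY, total INTEGER NOT NULL);
CREATE TABLE checkpoint (name TEXT PRIMARY KEY, position INTEGER NOT NULL);
INSERT INTO checkpoint VALUES ('order_totals', 0);
""")
# Stand-in for the event log: (order_id, amount) pairs in log order.
EVENTS = [(1, 100), (2, 50), (1, 25), (3, 10), (2, 5)]


def start_rebuild() -> None:
    """Discard the old projection and reset its checkpoint."""
    with conn:
        conn.execute("DELETE FROM order_totals")
        conn.execute("UPDATE checkpoint SET position = 0 WHERE name = 'order_totals'")


def rebuild(events, batch_size=2, crash_after_batches=None) -> int:
    """Apply events from the saved checkpoint onwards, one transaction per batch."""
    pos = conn.execute(
        "SELECT position FROM checkpoint WHERE name = 'order_totals'"
    ).fetchone()[0]
    batches = 0
    while pos < len(events):
        batch = events[pos:pos + batch_size]
        with conn:  # projection rows and checkpoint move together
            for order_id, amount in batch:
                conn.execute(
                    """INSERT INTO order_totals (order_id, total) VALUES (?, ?)
                       ON CONFLICT (order_id) DO UPDATE SET total = total + excluded.total""",
                    (order_id, amount),
                )
            pos += len(batch)
            conn.execute(
                "UPDATE checkpoint SET position = ? WHERE name = 'order_totals'", (pos,)
            )
        batches += 1
        if crash_after_batches is not None and batches == crash_after_batches:
            raise RuntimeError("simulated crash mid-rebuild")
    return pos


start_rebuild()
try:
    rebuild(EVENTS, crash_after_batches=1)  # commits the first batch, then "crashes"
except RuntimeError:
    pass
rebuild(EVENTS)  # resumes at position 2 instead of replaying from 0
print(dict(conn.execute("SELECT order_id, total FROM order_totals")))  # {1: 125, 2: 55, 3: 10}
```

Because this projection is additive, a checkpoint saved separately from the projection rows would let a resume apply some events twice; committing both together is what makes the restart safe.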

When contracts between systems evolve

As systems grow, the shape of data changes. New fields are added. Existing fields are modified. Events evolve over time.

Without structure, this can break consumers that are not prepared for newer versions of data.

To avoid this, events need versioning. Consumers need to be able to handle multiple versions of the same event, or systems need a schema contract that ensures backward compatibility before changes are deployed.
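One common way to let consumers handle several versions is upcasting: converting old event shapes into the latest shape before the handler sees them. The event shapes, the v1-to-v2 change, and the `USD` default below are hypothetical. The consumer also rejects versions newer than it understands, rather than silently misreading them.

```python
from typing import Any, Callable

Event = dict[str, Any]


def _order_placed_v1_to_v2(e: Event) -> Event:
    # Hypothetical change: v1 stored a float "amount" in dollars,
    # v2 stores integer "amount_cents" plus an explicit "currency".
    return {
        "type": e["type"],
        "version": 2,
        "order_id": e["order_id"],
        "amount_cents": round(e["amount"] * 100),
        "currency": "USD",  # assumed currency for all v1 events
    }


UPCASTERS: dict[tuple[str, int], Callable[[Event], Event]] = {
    ("OrderPlaced", 1): _order_placed_v1_to_v2,
}
LATEST_VERSION = {"OrderPlaced": 2}


def upcast(event: Event) -> Event:
    """Upgrade an event one version at a time until it reaches the latest shape."""
    latest = LATEST_VERSION[event["type"]]
    if event["version"] > latest:
        # The producer is ahead of this consumer: fail loudly instead of misreading.
        raise ValueError(f"unsupported {event['type']} version {event['version']}")
    while event["version"] < latest:
        event = UPCASTERS[(event["type"], event["version"])](event)
    return event


def describe_order_placed(event: Event) -> str:
    e = upcast(event)  # the handler only ever sees the latest shape
    return f"order {e['order_id']}: {e['amount_cents']} {e['currency']}"


old = {"type": "OrderPlaced", "version": 1, "order_id": 1, "amount": 19.99}
new = {"type": "OrderPlaced", "version": 2, "order_id": 2, "amount_cents": 4500, "currency": "EUR"}
print(describe_order_placed(old))  # order 1: 1999 USD
print(describe_order_placed(new))  # order 2: 4500 EUR
```

With this structure, the safe deployment order is to ship consumers that understand the new version first, then start producing it.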

Testing CQRS systems

Projection rebuild tests. Feed a known sequence of events into a fresh projection. Compare the resulting state against an expected snapshot. This validates that your projections are correct and that rebuilds are reliable.

Event replay idempotence tests. Feed the same sequence of events twice. The state after the second replay should be identical to the state after the first. If it changes, your projections are not idempotent — they will produce incorrect data when events are redelivered.

Consistency checks. Run scheduled queries that compare write models against read models. An order present in the write database but absent from the read model is a bug. An order total in the read model that does not match the write model is a bug. These checks catch silent drift before users do.

Backfill strategies. When you introduce a new read model, populate it with existing data either by querying the write database directly (simpler, works for smaller datasets) or by replaying historical events from the event log (works when the full event history is retained, as in event-sourced systems). Test both paths: the initial backfill and the ongoing event-driven updates.
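The projection rebuild test and the replay idempotence test can be written as plain pytest-style functions. The projection and events here are illustrative assumptions; the projection deduplicates by `event_id`, which is what makes the replay test pass. A naive counter without that check would double its counts and fail the idempotence test.

```python
from dataclasses import dataclass, field


@dataclass
class OrderCountProjection:
    """Counts orders per customer; remembers event IDs so replays are harmless."""
    counts: dict[int, int] = field(default_factory=dict)
    seen: set[str] = field(default_factory=set)

    def apply(self, event: dict) -> None:
        if event["event_id"] in self.seen:
            return  # already applied: redelivery or replay
        self.seen.add(event["event_id"])
        customer = event["customer_id"]
        self.counts[customer] = self.counts.get(customer, 0) + 1


EVENTS = [
    {"event_id": "e1", "customer_id": 7},
    {"event_id": "e2", "customer_id": 8},
    {"event_id": "e3", "customer_id": 7},
]


def test_projection_rebuild_matches_snapshot():
    projection = OrderCountProjection()  # fresh projection
    for event in EVENTS:
        projection.apply(event)
    assert projection.counts == {7: 2, 8: 1}  # expected snapshot


def test_replay_is_idempotent():
    projection = OrderCountProjection()
    for event in EVENTS:
        projection.apply(event)
    first = dict(projection.counts)
    for event in EVENTS:  # simulate a full redelivery of the same events
        projection.apply(event)
    assert projection.counts == first


if __name__ == "__main__":
    test_projection_rebuild_matches_snapshot()
    test_replay_is_idempotent()
    print("ok")
```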

How to introduce CQRS gradually

You do not need to rewrite everything. You can evolve into it.

Start with the pain point. Find one specific query causing problems. The order summary page that takes three seconds. The reporting dashboard that times out. Start there — not everywhere.

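Add read replicas first. If feasible, configure a read replica of your existing database and route the problematic query to it. This often brings immediate relief with little change to application code, usually just a different connection for that query. It is not CQRS, but it solves many read problems cheaply. Be aware that replicas typically lag the primary slightly, so reading from one is already a mild form of eventual consistency.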

Introduce a denormalised read model. For the specific problematic query, create a denormalised table designed for that query and update it within the same write transaction. Keep the old query working. Switch the API to use the new read model when you are confident. This is level two CQRS — safe, consistent, and dramatically faster.

Move to asynchronous updates. Once the denormalised model is working, consider decoupling it from the write transaction with events. Write transactions get faster. The read model becomes eventually consistent. Move only when the benefits justify the complexity.

Expand to other pain points. Repeat the process for other slow queries. Over time, you accumulate a set of read models. The write model stays clean throughout.

What not to do: introduce CQRS everywhere at once, rewrite your entire data access layer before proving the pattern works, or force event-driven CQRS onto simple queries that already work fine.

The mental model to carry forward

Reading and writing are not the same problem.

The write side cares about correctness: validation, invariants, transaction boundaries, consistency. It should be normalised, careful, and conservative.

The read side cares about speed and experience: fast responses, flexible formats, data shaped for the consumer. It should be denormalised, optimised, and possibly eventually consistent.

Most of the pain you feel in data-heavy systems comes from pretending these are the same problem — forcing one model to serve two fundamentally different purposes. CQRS is simply the moment you stop pretending.

Start simple. Add separation only where you feel real pain. Evolve gradually. And remember: CQRS is a spectrum, not a destination.

Summary

The real problem — Read-heavy systems choking on write-optimised schemas. Complex joins slowing APIs. Write models too fragile to change because reads depend on them.

The core idea — Commands change state. Queries read state. They can use different models, different code paths, different databases.

CQRS is a spectrum — From logical separation in the same database to event-driven projections across multiple systems. Choose the level your problem actually requires.

Event-driven CQRS — The write side emits events. The read side consumes them and builds independent projections. The write model stops knowing what readers need. Connects to the Outbox, Saga, and Inbox patterns.

Eventual consistency — Read models lag behind writes. Design for it: read-your-own-writes, UI hints, polling, or fallback to the write database for critical paths.

Trade-offs are real — Data duplication, operational complexity, subtle eventual consistency bugs, more infrastructure, harder debugging. These costs are genuine.

When NOT to use CQRS — Simple CRUD, low scale, small teams, no read-write asymmetry, no complex queries, early in your microservices journey.

CQRS vs Event Sourcing — Different patterns, often combined. CQRS separates read and write models. Event Sourcing stores complete change history.

Failure scenarios — Silent drift between write and read models, duplicated events, out-of-order processing, rebuild failures, schema evolution. Each has a solution, and each solution adds complexity.

Evolution strategy — Start with read replicas. Introduce denormalised models for specific pain points. Move to async event-driven updates gradually. Expand only when you have proven the pattern works.

The final model — Reads and writes are fundamentally different problems. Give each the system it deserves. Most production pain in data systems comes from pretending otherwise.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education—explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.