
Last Updated: March 18, 2026 at 17:30

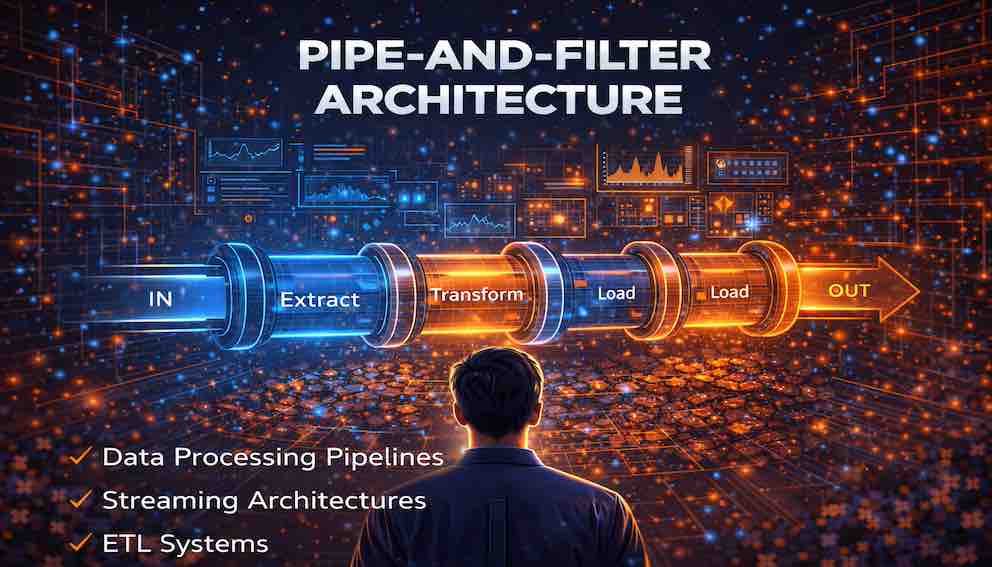

Pipe-and-Filter Architecture: Data Processing Pipelines, Streaming Systems, and the Foundations of ETL Workflows

Understanding how independent processing stages connected by data channels enable modular, scalable, and reusable data transformation pipelines

Pipe-and-filter architecture organizes systems as sequences of independent processing stages connected by data channels. This tutorial explores how filters and pipes work together, why they dominate streaming platforms and ETL workflows, and the practical challenges architects face when building production pipelines at scale.

Introduction

Some systems exist for one fundamental reason: to transform data step by step. Consider a video platform transcoding uploads into multiple formats, a fraud detection system evaluating transactions as they occur, or an analytics pipeline turning raw events into business insights. In all these cases, raw data enters one end, and something useful emerges from the other.

This is where pipe-and-filter architecture shines. Unlike the architectures we've explored elsewhere in this series—which focus on service boundaries, deployment units, or communication patterns—pipe-and-filter focuses on the shape of data as it flows through a system. It's the architecture of assembly lines, of Unix commands chained together, of ETL workflows that move data from operational databases into warehouses, and of streaming platforms processing millions of events per second.

What Is Pipe-and-Filter Architecture?

Pipe-and-filter architecture organizes a system as a series of independent data-processing stages connected by data channels. The two building blocks are elegantly simple:

Filters perform transformations on data. Each filter focuses on one specific processing step—it receives input, transforms it according to its responsibility, and produces output for the next stage.

Pipes transport data between filters. A pipe carries the output of one filter to the input of the next, acting as both a buffer and a decoupling mechanism.

A conceptual pipeline looks like this:

Raw Data → Filter 1 (Parsing) → Filter 2 (Validation) → Filter 3 (Enrichment) → Filter 4 (Aggregation) → Final Output

The architecture encourages modular data processing, reusable transformation components, and flexible pipeline construction. Stages can be added, removed, or reordered without modifying their neighbors, as long as each stage still receives data in the format it expects.
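To make the conceptual pipeline concrete, here is a minimal sketch that uses Python generators as in-process pipes. The record format (`user,country_code`), the `COUNTRY_NAMES` lookup, and all function names are assumptions made up for illustration.

```python
from collections import Counter

# Hypothetical raw input: "user,country_code" lines, invented for illustration.
RAW = ["alice,us", "bob,de", "broken-line", "carol,us"]

# Hypothetical reference data used by the enrichment stage.
COUNTRY_NAMES = {"us": "United States", "de": "Germany"}


def parse(lines):
    """Filter 1: split each raw line into fields."""
    for line in lines:
        yield line.split(",")


def validate(rows):
    """Filter 2: keep only rows that have exactly two fields."""
    for row in rows:
        if len(row) == 2:
            yield {"user": row[0], "country_code": row[1]}


def enrich(records):
    """Filter 3: add a readable country name to each record."""
    for rec in records:
        name = COUNTRY_NAMES.get(rec["country_code"], "unknown")
        yield {**rec, "country": name}


def aggregate(records):
    """Filter 4: count records per country."""
    return dict(Counter(rec["country"] for rec in records))


# Each generator pulls from the previous one, so the nesting plays the role of the pipes.
result = aggregate(enrich(validate(parse(RAW))))
print(result)  # {'United States': 2, 'Germany': 1}
```

Each function only knows the shape of the data it receives, which is what lets a stage be swapped or removed without editing the others.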

Think of an assembly line in a factory. Each station performs a specific task while the product gradually evolves as it moves forward. The worker at each station doesn't need the full picture—they just do their job and pass the result along.

A Deeper Look: Filters

Filters are the processing units, and they come in four broad categories based on the work they perform.

Data source filters generate or ingest data into the pipeline. They might read from files, databases, message queues, or network connections, and they are typically the first stage.

Transformer filters modify data in some way. Common transformations include cleaning malformed records, validating against rules, converting between formats, enriching records with external data, dropping unwanted records, and splitting or merging streams.

Aggregator filters combine multiple inputs into summary forms. Examples include counting events over time windows, computing rolling averages, or grouping records by category.

Data sink filters write processed data to external systems—databases, files, or downstream services. A sink is typically the last stage.
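The four categories can be sketched as four small Python functions. This is only an illustration: the `timestamp event` line format is an assumption, an in-memory `io.StringIO` stands in for a real file or queue, and a plain list stands in for a database.

```python
import io
from collections import Counter
from datetime import datetime


def source(stream):
    """Data source filter: ingest lines from a file-like object."""
    for line in stream:
        yield line.rstrip("\n")


def transformer(lines):
    """Transformer filter: drop blank lines, convert text to (datetime, event)."""
    for line in lines:
        if not line.strip():
            continue
        timestamp, event = line.split(" ", 1)
        yield datetime.fromisoformat(timestamp), event


def aggregator(events):
    """Aggregator filter: count events per one-hour window."""
    counts = Counter()
    for timestamp, _event in events:
        window_start = timestamp.replace(minute=0, second=0, microsecond=0)
        counts[window_start] += 1
    for window_start, count in sorted(counts.items()):
        yield window_start.isoformat(), count


def sink(rows, target):
    """Data sink filter: write results to an external target (here, a list)."""
    for row in rows:
        target.append(row)


# Hypothetical input, invented for illustration.
raw = io.StringIO(
    "2026-03-18T17:05:00 login\n"
    "\n"
    "2026-03-18T17:40:00 login\n"
    "2026-03-18T18:01:00 logout\n"
)
stored = []
sink(aggregator(transformer(source(raw))), stored)
print(stored)  # [('2026-03-18T17:00:00', 2), ('2026-03-18T18:00:00', 1)]
```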

Characteristics of Well-Designed Filters

A well-designed filter has several key properties. It has single responsibility—a parser parses; it doesn't also aggregate. This makes each filter easier to understand, test, and reuse.

Filters are independent. They don't depend directly on other filters—only on the shape of the data they receive. You can replace, upgrade, or scale a filter without touching its neighbors.

The best filters are data-agnostic. A JSON schema validator doesn't care what the JSON represents—only that it's valid. This maximizes reusability across different pipelines.

Filters should be stateless when possible. Stateless filters process each record independently without memory of what came before. When state is necessary—as in aggregators—it should be managed explicitly and carefully.
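The difference between a stateless and a stateful filter is easy to see side by side. In this sketch, `to_celsius` and `RollingAverage` are hypothetical names chosen for illustration; the point is that the stateful filter keeps its memory in one explicit, bounded place.

```python
from collections import deque


def to_celsius(fahrenheit):
    """Stateless filter: the output depends only on the current record."""
    return (fahrenheit - 32) * 5 / 9


class RollingAverage:
    """Stateful filter: keeps an explicit, bounded window of recent values."""

    def __init__(self, window_size):
        # deque(maxlen=...) discards the oldest value automatically.
        self.window = deque(maxlen=window_size)

    def process(self, value):
        self.window.append(value)
        return sum(self.window) / len(self.window)


readings_f = [32.0, 50.0, 68.0, 86.0]
celsius = [to_celsius(f) for f in readings_f]

rolling = RollingAverage(window_size=2)
smoothed = [rolling.process(c) for c in celsius]

print(celsius)   # [0.0, 10.0, 20.0, 30.0]
print(smoothed)  # [0.0, 5.0, 15.0, 25.0]
```

Any number of `to_celsius` instances can run in parallel with no coordination. The rolling average cannot be split that way without first deciding how its window is partitioned and recovered.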

Example: A Simple Log Parsing Filter

Consider a filter that parses raw log lines into structured records.

Input:

The filter applies a regular expression to extract the timestamp, event type, user ID, and device type.

Output:

This filter does exactly one thing: convert raw text into structured data. It parses and passes the result forward.
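A minimal sketch of such a filter in Python might look like the following. The log line format, the regular expression, and the function name `parse_log_line` are all assumptions made for illustration; a real system would match its own log format.

```python
import re

# Assumed log format, invented for illustration:
#   2026-03-18T17:30:00Z LOGIN user=1042 device=mobile
LOG_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<event_type>[A-Z_]+)\s+"
    r"user=(?P<user_id>\d+)\s+device=(?P<device_type>\w+)$"
)


def parse_log_line(line):
    """Return a structured record, or None if the line does not match."""
    match = LOG_PATTERN.match(line.strip())
    return match.groupdict() if match else None


record = parse_log_line("2026-03-18T17:30:00Z LOGIN user=1042 device=mobile")
print(record)
# {'timestamp': '2026-03-18T17:30:00Z', 'event_type': 'LOGIN',
#  'user_id': '1042', 'device_type': 'mobile'}

print(parse_log_line("not a log line"))  # None
```

Returning `None` for unmatched lines leaves the decision about invalid input to a later validation stage, which keeps the parser focused on parsing.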

Pipes: The Connectors Between Filters

Pipes transport data between filters. Depending on the system, a pipe might be implemented as an in-memory queue for filters running in the same process, a message broker like Apache Kafka or RabbitMQ for distributed pipelines, files or object storage for batch-oriented systems, or a streaming platform that acts as both the pipes and the execution environment.

The exact implementation matters less than the concept: pipes decouple filters from one another.
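For filters running in the same process, the simplest pipe is an in-memory queue. The sketch below uses Python's standard `queue.Queue` and one thread per filter; the `run_filter` helper and the `SENTINEL` end-of-stream marker are hypothetical conventions for this example, not part of any framework.

```python
import queue
import threading

SENTINEL = object()  # hypothetical end-of-stream marker


def run_filter(transform, inbox, outbox):
    """Read from the input pipe, transform, and write to the output pipe."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # propagate shutdown downstream
            break
        outbox.put(transform(item))


pipe_a, pipe_b, pipe_c = queue.Queue(), queue.Queue(), queue.Queue()

# Two filters, each knowing only its own input and output pipe.
stages = [
    threading.Thread(target=run_filter, args=(str.strip, pipe_a, pipe_b)),
    threading.Thread(target=run_filter, args=(str.upper, pipe_b, pipe_c)),
]
for thread in stages:
    thread.start()

for line in ["  alpha ", " beta", "gamma  "]:
    pipe_a.put(line)
pipe_a.put(SENTINEL)

for thread in stages:
    thread.join()

results = []
while True:
    item = pipe_c.get()
    if item is SENTINEL:
        break
    results.append(item)

print(results)  # ['ALPHA', 'BETA', 'GAMMA']
```

Swapping the queues for a message broker would change how `get` and `put` are implemented, but the filters themselves would look much the same.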

Why Decoupling Matters

Because a filter only knows about the data coming in and going out—not about what produced it or what will consume it—several powerful capabilities emerge.

Filters can be distributed across machines, data centers, or different cloud providers. The pipe abstracts away the communication mechanism.

Pipelines can be reconfigured. You can insert a new filter between two existing ones without modifying either. You can swap out a slow filter for a faster one without touching anything else.

Different stages can scale independently. If parsing becomes a bottleneck, you can run multiple instances of the parsing filter all consuming from the same input pipe, without any changes to upstream or downstream stages.
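Scaling a single stage can be sketched with several workers reading from one shared queue. The helper names here are hypothetical, and threads are used only to keep the example short; for CPU-bound filters in Python, separate processes would usually be a better fit.

```python
import queue
import threading


def parse_worker(inbox, outbox):
    """One instance of the parsing filter."""
    while True:
        line = inbox.get()
        if line is None:  # one None per worker signals shutdown
            break
        outbox.put(int(line))


def run_parsing_stage(lines, worker_count):
    """Run `worker_count` parser instances against the same input pipe."""
    inbox, outbox = queue.Queue(), queue.Queue()
    workers = [
        threading.Thread(target=parse_worker, args=(inbox, outbox))
        for _ in range(worker_count)
    ]
    for worker in workers:
        worker.start()
    for line in lines:
        inbox.put(line)
    for _ in workers:
        inbox.put(None)
    for worker in workers:
        worker.join()
    return [outbox.get() for _ in range(len(lines))]


parsed = run_parsing_stage(["3", "1", "2", "5", "4"], worker_count=3)
print(sorted(parsed))  # [1, 2, 3, 4, 5]
```

Note that the output is sorted before printing: with several instances of a filter, records may leave the stage in a different order than they entered, so downstream stages should not rely on strict ordering unless the pipe guarantees it.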

Think of a pipe like a conveyor belt in a factory. One worker places completed work on the belt; another picks it up at the next station. If you replace the first worker with a robot, the second worker doesn't notice. If you add an inspection station, you just insert a new segment of belt. The belt makes the system flexible.

The Power of Composition

One of the most powerful properties of pipe-and-filter architecture is that pipelines can themselves become filters within larger pipelines.

Imagine you have three pipelines: one that cleans raw data, one that enriches cleaned data with reference information, and one that aggregates enriched data into metrics. Each is internally composed of multiple filters. But from the outside, each pipeline looks like a single logical transformation. You can compose them into a larger pipeline:

Raw Data → Cleaning Pipeline → Enrichment Pipeline → Aggregation Pipeline → Results

This hierarchical composition allows systems to grow in complexity while maintaining modularity. It's exactly how Unix shell pipes work—you can chain grep, sort, uniq, and wc -l together, but you can also wrap that whole chain in a shell script and use the script as a single component in an even larger pipeline.
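The same idea can be sketched in a few lines of Python. The `compose` helper and the small filters below are hypothetical names for illustration; what matters is that the value returned by `compose` has the same shape as any single filter, so it can be composed again.

```python
from functools import reduce


def compose(*filters):
    """Chain filters left to right; the result is itself a filter."""
    def pipeline(data):
        return reduce(lambda stream, f: f(stream), filters, data)
    return pipeline


def strip_blanks(lines):
    return (line.strip() for line in lines if line.strip())


def lowercase(lines):
    return (line.lower() for line in lines)


def add_length(lines):
    return ((line, len(line)) for line in lines)


def keep_long(pairs):
    return (pair for pair in pairs if pair[1] > 3)


# Two small pipelines...
cleaning = compose(strip_blanks, lowercase)
enrichment = compose(add_length)

# ...used as single filters inside a larger one.
full = compose(cleaning, enrichment, keep_long)

output = list(full(["  Hello ", "", "Hi", " WORLD"]))
print(output)  # [('hello', 5), ('world', 5)]
```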

Real-World Applications

A Log Processing Pipeline

Consider a platform that processes millions of server logs every day. Raw logs are rarely ready for analytics—they need to pass through several transformation stages.

A typical pipeline might look like this:

Raw Logs → Parse Log Format → Remove Invalid Records → Enrich with User Data → Aggregate Statistics → Store Analytics Results

Filter 1—Log Parsing converts raw text lines into structured records.

Filter 2—Data Validation removes entries with missing required fields, malformed timestamps, or out-of-range values. It routes invalid records to a separate error log rather than silently dropping them.

Filter 3—Data Enrichment adds context to each event: geographic location from the IP address, account details from a user database, or product names resolved from product IDs.

Filter 4—Aggregation computes metrics like logins per hour or most active regions, typically over time windows.

Filter 5—Storage writes the processed results to an analytics database or data warehouse.

If a new requirement arrives—say, detecting suspicious login patterns—you simply insert a new fraud detection filter after enrichment. The existing filters continue doing exactly what they were doing, passing data forward. This is the practical benefit of keeping filters independent.
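Here is a small sketch of that change, treating the pipeline as a list of stages. The stage functions, the `REGION_BY_IP` lookup, and the "three failed attempts" rule are all invented for illustration.

```python
# Hypothetical lookup table standing in for a real geo-IP service.
REGION_BY_IP = {"203.0.113.7": "EU", "198.51.100.2": "US"}

alerts = []


def enrich(events):
    """Existing filter: add a region to each event."""
    for event in events:
        yield {**event, "region": REGION_BY_IP.get(event["ip"], "unknown")}


def aggregate(events):
    """Existing filter: count events per region."""
    counts = {}
    for event in events:
        counts[event["region"]] = counts.get(event["region"], 0) + 1
    return counts


def flag_suspicious(events):
    """New filter: record an alert, then pass every event on unchanged."""
    for event in events:
        if event["failed_attempts"] >= 3:
            alerts.append(event["ip"])
        yield event


def run(stages, data):
    for stage in stages:
        data = stage(data)
    return data


events = [
    {"ip": "203.0.113.7", "failed_attempts": 5},
    {"ip": "198.51.100.2", "failed_attempts": 0},
]

before = run([enrich, aggregate], events)
after = run([enrich, flag_suspicious, aggregate], events)

print(before == after)  # True: existing stages produce the same result
print(alerts)           # ['203.0.113.7']
```

Neither `enrich` nor `aggregate` was edited. The only change is one extra entry in the list of stages.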

Streaming Architectures

Modern data platforms increasingly process continuous streams rather than periodic batches. In streaming systems, data flows through the pipeline as events occur. Examples include real-time analytics platforms, financial transaction monitoring, IoT sensor data processing, and recommendation engines.

In these systems, the pipe-and-filter model maps naturally onto the architecture. The streaming platform acts as the pipes, while individual processing components act as the filters.

A real-time fraud detection pipeline might look like:

Payment Event Stream → Message Validation → Transaction Enrichment → Rule-Based Checks → ML Model Scoring → Alert Routing → Fraud Investigation Team

Data flows continuously, allowing a well-tuned system to respond within milliseconds to seconds of an event. Modern frameworks like Apache Kafka Streams, Apache Flink, and Apache Spark Structured Streaming implement pipe-and-filter concepts at scale. They offer built-in state management, fault tolerance, and horizontal scaling across clusters, and they can provide exactly-once processing guarantees when configured for it and paired with compatible sources and sinks.

ETL Systems

One of the most common real-world implementations of pipe-and-filter architecture is the ETL pipeline. ETL stands for Extract, Transform, Load. Pipelines extract data from source systems, transform it through cleaning and enrichment stages, and load it into target systems like data warehouses.

Consider an e-commerce company analyzing sales performance across multiple sources: order records from the transaction database, customer profiles from the CRM system, product catalog from inventory, and website clickstream events. Different sources follow different cleaning paths before converging at join and aggregation stages.

Because each transformation is an isolated filter, engineers can modify customer normalization logic without touching order validation. They can add a new data source without redesigning the pipeline. They can rerun a single stage after fixing a bug. This modularity is a major reason pipe-and-filter remains so widely used in data engineering.
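A compact sketch of this shape, with two sources following separate cleaning paths before they converge, might look like this. The sample data, table name, and function names are assumptions for illustration, and an in-memory SQLite database stands in for the warehouse.

```python
import sqlite3

# Hypothetical extracts from two source systems.
ORDERS = [
    {"order_id": 1, "customer_id": "c1", "amount": 40.0},
    {"order_id": 2, "customer_id": "c2", "amount": -5.0},  # invalid amount
    {"order_id": 3, "customer_id": "c1", "amount": 10.0},
]
CUSTOMERS = [
    {"customer_id": "c1", "name": "  Ada Lovelace "},
    {"customer_id": "c2", "name": "grace hopper"},
]


def validate_orders(orders):
    """Transform (orders path): drop orders with non-positive amounts."""
    return [order for order in orders if order["amount"] > 0]


def normalize_customers(customers):
    """Transform (customers path): tidy names and index by customer ID."""
    return {c["customer_id"]: c["name"].strip().title() for c in customers}


def join_and_aggregate(orders, customers_by_id):
    """Transform: join both paths and total sales per customer."""
    totals = {}
    for order in orders:
        name = customers_by_id[order["customer_id"]]
        totals[name] = totals.get(name, 0.0) + order["amount"]
    return sorted(totals.items())


def load(rows, conn):
    """Load: write results into the target table."""
    conn.execute("CREATE TABLE sales_by_customer (name TEXT, total REAL)")
    conn.executemany("INSERT INTO sales_by_customer VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
rows = join_and_aggregate(validate_orders(ORDERS), normalize_customers(CUSTOMERS))
load(rows, conn)

loaded = conn.execute("SELECT name, total FROM sales_by_customer").fetchall()
print(loaded)  # [('Ada Lovelace', 50.0)]
```

Changing how customer names are normalized means editing `normalize_customers` only; the order validation path is untouched.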

Advantages of Pipe-and-Filter Architecture

Modularity. Each filter has a single responsibility, making pipelines easier to understand and maintain. When a bug appears, you can identify the relevant filter quickly.

Reusability. Filters often perform generic transformations that appear across many pipelines. A timestamp normalizer or JSON validator can be written once and reused across the organization.

Parallel processing. Multiple filters can execute simultaneously. While Filter 3 processes batch 100, Filter 2 processes batch 101 and Filter 1 works on batch 102. This pipelining significantly improves throughput.

Flexibility. Pipelines evolve easily. New filters slot in between existing ones without redesign. Stages can be reordered. An existing filter can be replaced with an improved version.

Testability. Because filters have clearly defined inputs and outputs, they can be unit tested in isolation. Feed a filter known inputs, verify the outputs, and you're done.

Independent scalability. Bottleneck stages can scale without affecting others. If enrichment is slow, you run more instances of the enrichment filter against the same input pipe.

Composability. Pipelines themselves can act as filters within larger pipelines, letting systems grow to arbitrary complexity while maintaining modularity.

Limitations and Challenges

Data format compatibility. Every filter must agree on the data format flowing through the pipes. If one filter changes its output schema, downstream filters may crash or produce wrong results. Good strategies include using a schema registry, defining explicit interface contracts, and preferring evolvable serialization formats like Apache Avro or Protocol Buffers.
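The idea of an explicit, evolvable contract can be sketched with plain Python, without any particular serialization library. The `LoginEventV2` record and the `decode` function are hypothetical; the sketch assumes a "tolerant reader" approach in which new fields get defaults and unknown fields are ignored.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class LoginEventV2:
    user_id: str
    timestamp: str
    # Added in version 2. The default keeps records from v1 producers valid.
    device_type: str = "unknown"


REQUIRED = {"user_id", "timestamp"}
KNOWN = {f.name for f in fields(LoginEventV2)}


def decode(raw):
    """Check a raw record against the contract and build a typed event."""
    missing = REQUIRED - set(raw)
    if missing:
        raise ValueError(f"record violates contract, missing: {sorted(missing)}")
    # Ignore fields this consumer does not know about yet.
    return LoginEventV2(**{k: v for k, v in raw.items() if k in KNOWN})


old_style = decode({"user_id": "u1", "timestamp": "2026-03-18T17:30:00Z"})
newer_style = decode({
    "user_id": "u2",
    "timestamp": "2026-03-18T17:31:00Z",
    "device_type": "mobile",
    "experimental_field": 1,
})

print(old_style.device_type)    # unknown
print(newer_style.device_type)  # mobile
```

Formats such as Avro and Protocol Buffers build similar compatibility rules into the serialization layer, so they do not have to be hand-written in every filter.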

Accumulated latency. Each filter adds processing time, and data waits in each pipe. A pipeline with many stages will have higher end-to-end latency than a simpler one.

Error handling. Failures can occur anywhere. Architects must design for retrying failed steps, isolating malformed records so they don't block the pipeline, and deciding whether partial failures should halt processing or continue with reduced functionality.

Back-pressure. If a downstream filter is slower than the filter feeding it, data accumulates. Left unmanaged, this can exhaust memory. Back-pressure mechanisms allow slow components to signal upstream components to slow down.
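One simple form of back-pressure is a bounded pipe: when it is full, the producer blocks until the consumer catches up. The sketch below uses the standard `queue.Queue(maxsize=...)`; the queue size and the artificial delay are arbitrary values chosen for illustration.

```python
import queue
import threading
import time

pipe = queue.Queue(maxsize=2)  # bounded pipe: at most 2 items may wait
consumed = []
observed_depths = []


def producer():
    for i in range(10):
        pipe.put(i)  # blocks while the pipe is full
        observed_depths.append(pipe.qsize())
    pipe.put(None)  # end-of-stream marker


def slow_consumer():
    while True:
        item = pipe.get()
        if item is None:
            break
        time.sleep(0.01)  # simulate a slow downstream filter
        consumed.append(item)


threads = [
    threading.Thread(target=producer),
    threading.Thread(target=slow_consumer),
]
for thread in threads:
    thread.start()
for thread in threads:
    thread.join()

print(consumed == list(range(10)))  # True
print(max(observed_depths) <= 2)    # True: the backlog never exceeds the bound
```

With an unbounded queue, the producer would finish immediately and the backlog would grow with the input size instead of being capped.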

State management. Aggregation filters need to remember what they've seen. In distributed, fault-tolerant pipelines this raises questions about where state is stored, how it's recovered after crashes, and how it's partitioned across multiple instances.

Data lineage and debugging. When a downstream report shows incorrect numbers, which filter introduced the error? Embedding correlation IDs in records and logging which filters processed each record helps but requires deliberate design.
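A lightweight way to sketch this is an envelope that carries a correlation ID and a trail of the filters that touched the record. The envelope layout and the `ingest` and `with_lineage` helpers are hypothetical conventions for this example.

```python
import uuid


def ingest(payload):
    """Wrap a raw payload in an envelope with a fresh correlation ID."""
    return {"correlation_id": str(uuid.uuid4()), "payload": payload, "trail": []}


def with_lineage(name, transform):
    """Wrap a transformation so it records its name on every record it handles."""
    def stage(record):
        return {
            "correlation_id": record["correlation_id"],
            "payload": transform(record["payload"]),
            "trail": record["trail"] + [name],
        }
    return stage


stages = [
    with_lineage("strip", str.strip),
    with_lineage("to_int", int),
    with_lineage("double", lambda value: value * 2),
]

record = ingest(" 42 ")
original_id = record["correlation_id"]
for stage in stages:
    record = stage(record)

print(record["payload"])  # 84
print(record["trail"])    # ['strip', 'to_int', 'double']
```

When a number looks wrong downstream, the trail shows which filters handled the record, and the correlation ID links it back to log entries from each stage.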

Observability. With many moving parts, you need to monitor processing rates, error rates, queue depths, and latency at each stage. Without good observability, problems accumulate unnoticed.
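Per-stage metrics can be added without changing the filter itself by wrapping it. This is a minimal sketch with hypothetical names (`StageMetrics`, `instrument`); a production system would more likely export these counters to a monitoring backend than keep them in memory.

```python
import time
from dataclasses import dataclass


@dataclass
class StageMetrics:
    processed: int = 0
    errors: int = 0
    total_seconds: float = 0.0


def instrument(fn, metrics):
    """Wrap a filter so that every call updates throughput, error, and latency counters."""
    def wrapped(record):
        start = time.perf_counter()
        try:
            result = fn(record)
            metrics.processed += 1
            return result
        except Exception:
            metrics.errors += 1
            raise
        finally:
            metrics.total_seconds += time.perf_counter() - start
    return wrapped


metrics = StageMetrics()
to_int = instrument(int, metrics)

for raw in ["1", "2", "x"]:
    try:
        to_int(raw)
    except ValueError:
        pass  # the error is already counted by the wrapper

print(metrics.processed, metrics.errors)  # 2 1
```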

When Pipe-and-Filter Architecture Fits

This style works best when a system's primary job is transforming data through a sequence of stages.

Strong fits include data processing pipelines, ETL workflows, streaming analytics, media processing, log processing, compiler and interpreter design, and scientific computing workflows with multiple analysis steps.

Poor fits include interactive user interfaces, simple request-response APIs, systems requiring complex bidirectional communication, use cases with very strict latency requirements where each stage's overhead matters, and straightforward CRUD applications where a pipeline adds complexity without benefit.

Designing Effective Pipelines

Define clear data contracts. The schema flowing through each pipe is the most important design decision. Version schemas carefully. Breaking changes require coordinated updates across all downstream filters.

Keep filters focused. If a filter is doing two things, split it. Single-responsibility filters are easier to test, reuse, and replace.

Design for statelessness where possible. Stateless filters are trivially scalable and recoverable. When state is unavoidable, use a framework that manages it reliably.

Handle failures gracefully. Design each filter to handle errors without crashing the pipeline. Route unprocessable records to a dead letter queue. Implement retry logic with exponential backoff for transient failures.
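The retry and dead letter ideas can be sketched together. Everything named here (`TransientError`, `retry`, `run_filter`, the simulated failure table) is hypothetical, and the delays are kept tiny so the example runs quickly.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. a brief outage)."""


def retry(fn, record, attempts=3, base_delay=0.01):
    """Retry transient failures with exponential backoff: 0.01s, 0.02s, ..."""
    for attempt in range(attempts):
        try:
            return fn(record)
        except TransientError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)


def run_filter(fn, records, dead_letters):
    """Apply fn to each record; park unprocessable records instead of crashing."""
    for record in records:
        try:
            yield retry(fn, record)
        except Exception as exc:
            dead_letters.append((record, repr(exc)))


# Simulated behavior: record "7" fails once with a transient error.
failures_left = {"7": 1}


def flaky_to_int(record):
    if failures_left.get(record, 0) > 0:
        failures_left[record] -= 1
        raise TransientError("simulated temporary outage")
    return int(record)  # raises ValueError for malformed input


dead_letters = []
processed = list(run_filter(flaky_to_int, ["1", "7", "oops", "3"], dead_letters))

print(processed)           # [1, 7, 3]
print(dead_letters[0][0])  # oops
```

The transient failure on `"7"` is retried and succeeds, while the malformed `"oops"` goes straight to the dead letter list and the rest of the stream keeps flowing.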

Monitor everything. Instrument each filter with metrics for throughput, error rate, and latency. Track queue depths. Alert on anomalies before they become outages.

Test filters independently. Unit test each filter with representative inputs, including edge cases and malformed data. Run integration tests on the assembled pipeline to catch problems that only appear when filters interact.
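A unit test for a single filter can be as small as the sketch below. The `drop_incomplete` filter and the test names are hypothetical; the tests are plain functions with `assert` statements, in the style a runner such as pytest would collect, and are called directly at the end so the example runs on its own.

```python
def drop_incomplete(records):
    """Filter under test: keep records that have a non-empty 'user_id'."""
    return [record for record in records if record.get("user_id")]


def test_keeps_valid_records():
    assert drop_incomplete([{"user_id": "u1"}]) == [{"user_id": "u1"}]


def test_drops_missing_and_empty_ids():
    malformed = [{}, {"user_id": ""}, {"user_id": None}]
    assert drop_incomplete(malformed) == []


def test_empty_input_yields_empty_output():
    assert drop_incomplete([]) == []


# Run the tests directly; no test framework is required for this sketch.
for test in (
    test_keeps_valid_records,
    test_drops_missing_and_empty_ids,
    test_empty_input_yields_empty_output,
):
    test()
print("all filter tests passed")
```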

Conclusion: The Elegance of Pipelines

Pipe-and-filter architecture organizes software as data processing pipelines: independent components connected by channels, each performing a specific transformation, none knowing more than it needs to.

This design is modular, reusable, scalable, flexible, and testable. Unlike architectures that focus on deployment structures or service boundaries, pipe-and-filter focuses on the shape of data as it flows through a system. It's the architecture of assembly lines, of Unix pipes, of ETL workflows, and of streaming analytics at global scale.

It is one of the oldest and most enduring patterns in software design, precisely because it maps so naturally onto a problem that never goes away: raw data arrives, and useful information must come out the other side. Understanding when to reach for this pattern—recognizing that a system is fundamentally about transforming data through a sequence of steps—is an important and underrated skill in software architecture.

Key Takeaways

Pipe-and-filter architecture organizes systems as independent processing stages connected by data channels. Filters perform specific transformations—they are modular, focused, and independent of one another. Pipes transport data between filters, decoupling stages and providing flexibility to reconfigure, scale, or replace individual components.

Well-designed filters have a single responsibility, are stateless when possible, and depend only on the format of the data they receive. The architecture's advantages include modularity, reusability, parallel throughput, flexibility, testability, and independent scalability. Its challenges include schema compatibility, accumulated latency, error handling, back-pressure management, state management, data lineage tracking, and observability.

Real-world examples span ETL pipelines, streaming analytics, media processing, log aggregation, compiler design, and Unix shell pipes. The style fits best when a system's primary job is sequential data transformation and fits poorly for interactive UIs, simple CRUD operations, or microsecond-latency requirements. Modern stream processing frameworks implement these concepts at scale with built-in fault tolerance, state management, and exactly-once semantics.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education: explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.