Last Updated: May 2, 2026 at 13:00

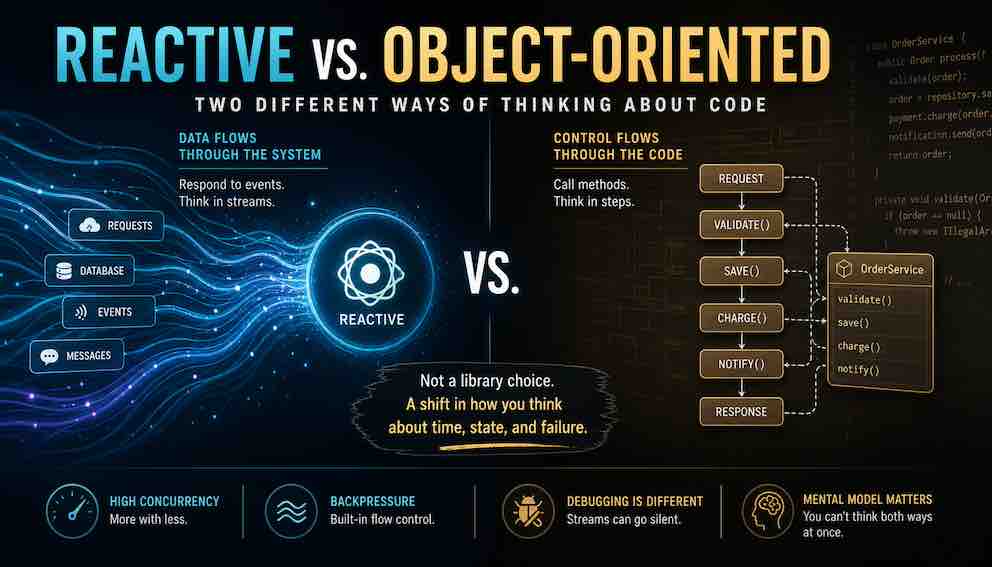

Reactive vs. Object-Oriented: Two Different Ways of Thinking About Code

Why choosing reactive programming is not a library decision — it is an irreversible shift in how you think about time, state, and failure

Most teams adopt reactive programming for the throughput gains and only later discover they have also inherited a completely different mental model for debugging, state management, and error handling — one that cannot coexist peacefully with object-oriented thinking in the same developer's head at two in the morning. This article explains how reactive works, what it costs, where it quietly goes wrong, and why the question is never "is reactive better?" but always "what problem am I actually trying to solve?"

Let's start with a story. A team had built a perfectly respectable e-commerce backend using Spring MVC and traditional object-oriented practices. Everything worked fine. Then Black Friday came, and their thread pool fell over. Not because the database was slow or the code was inefficient, but because every concurrent request held a thread while waiting for downstream responses. The hardware was fine. The network was fine. But the thread-based concurrency model had hit its limit.

Someone on the team had watched a conference talk about Spring WebFlux. It promised non-blocking concurrency and better throughput under load. They spent two months rewriting critical paths to use reactive streams. When they deployed, the system handled the load beautifully. Then a subtle bug appeared. Orders would occasionally disappear from the confirmation page, only to show up again fifteen minutes later in the admin panel.

Debugging took four days. The culprit was a single missing error handler in a complex Reactor chain, which let the stream terminate on an error instead of logging it. One line. Four days.

That team learned something that no conference talk had warned them about. Reactive programming was not just a different library or syntax. It demanded a different way of thinking about time, state, and debugging. They had underestimated the mental model shift, and it cost them.

Let us understand why.

What these approaches actually mean

Object-oriented programming, the style most of us learned first, thinks in terms of control flow. You have objects with methods. You call a method. It does something, perhaps calls other methods, then returns. The path your program takes is a sequence of explicit steps. When you debug, you set a breakpoint and step through line by line. The program moves because you tell it to move.

Reactive programming flips this. Instead of control flow, you think in data flow. Instead of calling methods, you declare transformations on streams of events. Instead of telling the program when to do something, you describe what should happen whenever data arrives. The program then reacts to data as it comes.

Here is the most important distinction. In object-oriented programming, you pull data by calling methods. In reactive programming, data pushes itself to you.

Consider this with a concrete example. In OOP, you might write:
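A minimal sketch of the pull style. The `OrderRepository` class and its in-memory data are hypothetical stand-ins for a blocking data-access call:

```java
import java.util.List;

final class PullExample {
    // Hypothetical repository; in a real system this would be a blocking database call.
    static final class OrderRepository {
        List<String> findAll() {
            return List.of("order-1", "order-2");
        }
    }

    // Pull style: the caller asks for the data and waits for the return value.
    static List<String> loadOrders(OrderRepository repository) {
        List<String> orders = repository.findAll(); // execution stops here until the data arrives
        return orders;
    }

    public static void main(String[] args) {
        System.out.println(loadOrders(new OrderRepository()));
    }
}
```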

You are reaching out and pulling data into your variable. Execution stops right there until the data arrives. In reactive, you write something closer to:
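A minimal sketch of the push style, using the JDK's `SubmissionPublisher` as a dependency-free stand-in for a Reactor `Flux`. The order names are invented for illustration, and the final `join()` exists only so the demo can inspect the result:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.SubmissionPublisher;

final class PushExample {
    // Push style: declare what happens to each order, then let the data arrive.
    static List<String> displayOrders(List<String> incoming) {
        List<String> displayed = new CopyOnWriteArrayList<>();
        SubmissionPublisher<String> orders = new SubmissionPublisher<>();

        // "When orders arrive, display them." Nothing is fetched at this point.
        CompletableFuture<Void> done = orders.consume(displayed::add);

        incoming.forEach(orders::submit); // the source pushes each order to the listener
        orders.close();                   // signal that no more orders will come
        done.join();                      // demo only: wait so the result can be returned
        return displayed;
    }

    public static void main(String[] args) {
        System.out.println(displayOrders(List.of("order-1", "order-2")));
    }
}
```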

You are not pulling anything. You are saying "when orders arrive, display them" and then you move on. The data will come to you when it is ready.

This difference seems small. In practice, it changes everything about how you debug, how you think about errors, and how you organize your application.

Why debugging reactive code feels so strange

This is where the real cost of reactive becomes visible.

In OOP, when something goes wrong, you ask a sequence of questions. What method was I in? What line are we on? What were the arguments? What called this method? You can recreate the entire timeline of your program by looking at the call stack and the current state of objects. Step-through debugging works beautifully because execution follows a single path through time.

In reactive, you cannot do this. Your program is not a single path. It is a graph of transformations that data flows through, potentially arriving from multiple sources at unpredictable times. When the orders started disappearing, that team could not set a breakpoint on a line number and step through. The error was not happening on a line. It was happening in a stream that had gone silent.

When something breaks in reactive code, you do not ask "what line are we on?" You ask "which stream emitted an error, and what were the last ten values that flowed through these five transformations before it broke?" That is a fundamentally different question. Answering it requires different tools.

Stack traces become less useful in reactive systems because the error often surfaces in a subscriber far away from the original source. A database error inside a flatMap operator produces a stack trace that shows Reactor internals rather than your business logic. The context of how data arrived gets lost unless you explicitly carry it forward.

The team eventually learned to debug by adding logging operators into their stream chains, printing values at each stage, and building mental timelines from the logs. They used doOnNext in Reactor to peek at values without transforming them. They added error logging before their error recovery logic. This worked — but a debugging session that would take ten minutes in OOP took two hours in reactive.

Most developers who struggle with reactive are not struggling with the operators. They are struggling with the fact that their intuitions about program execution no longer apply.

How state thinking changes

The second major shift involves how you think about state.

Before we talk about reactive programming's approach to state, let's make sure we agree on what "state" even means. State is just data that can change over time. A counter that goes from 0 to 1 to 2 is state. A username that a user can update is state. Whether a button is disabled or enabled is state.

In the kind of code most developers learn first — object-oriented programming — state lives inside objects as variables. You have a counter object. It has a number stored inside it. You call a method to increment it. You read the variable whenever you need the current value. It is direct and intuitive: the data sits somewhere, you go and get it, you change it when you need to.

Reactive programming asks you to think about this completely differently. Instead of storing a value and changing it directly, you describe a stream of events over time and derive the current value from those events.

Think of it like a bank account statement versus a current balance. Your bank doesn't just store "you have £500." It stores every transaction — deposit £200, withdraw £50, deposit £350 — and your balance is calculated from that history. Reactive programming thinks like the transaction log, not the balance field. Nothing is stored directly. The current value emerges from the events that have flowed through the stream.
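The bank statement analogy can be sketched in a few lines of plain Java. Representing each transaction as a signed amount is an assumption made for brevity; the point is only that the balance is derived from the events rather than stored:

```java
import java.util.ArrayList;
import java.util.List;

final class BalanceFromEvents {
    // Each event is a signed amount in pounds: deposits positive, withdrawals negative.
    // The balance is never stored on its own; it is derived by replaying the events.
    static List<Integer> runningBalances(List<Integer> transactions) {
        List<Integer> balances = new ArrayList<>();
        int balance = 0;
        for (int amount : transactions) {
            balance += amount;      // fold the next event into the derived state
            balances.add(balance);  // emit the new current value
        }
        return balances;
    }

    public static void main(String[] args) {
        // deposit 200, withdraw 50, deposit 350
        System.out.println(runningBalances(List.of(200, -50, 350))); // prints [200, 150, 500]
    }
}
```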

This feels awkward at first, and that's normal. The awkwardness is actually a useful signal. If you find yourself constantly fighting to grab the current value of a stream as a one-off snapshot — the way you'd just read a variable — reactive is working against you in that part of your code. That's often a sign you're using the wrong tool there.

Hot and cold observables: the concept that catches everyone out

This is the idea that most developers from an OOP background have never encountered, because there's simply no equivalent in that world. Understanding it is what separates people who use reactive programming confidently from people who use it and occasionally get mysterious bugs.

In reactive programming, a stream can behave in two very different ways depending on whether it is cold or hot.

A cold observable starts fresh for every subscriber.

Imagine a Netflix show. Every person who clicks play watches it from episode one. You watching it has no effect on somebody else watching it. Everyone gets their own independent copy, starting from the beginning.

An HTTP request works this way in reactive programming. Every time a new piece of your application starts listening to it, a brand new network request goes out. If three parts of your app are all listening to the same HTTP stream, three separate requests fire. Most developers find this deeply surprising the first time they discover it.
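To make the "three listeners, three requests" behaviour concrete, here is a hand-rolled sketch. `ColdSource` is a hypothetical toy type, not a Reactor class, and the counter stands in for a real network call:

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Consumer;
import java.util.function.Supplier;

final class ColdSourceExample {
    // A cold source holds a recipe, not a result: the work runs once per subscriber.
    static final class ColdSource<T> {
        private final Supplier<T> work;

        ColdSource(Supplier<T> work) {
            this.work = work;
        }

        void subscribe(Consumer<T> subscriber) {
            subscriber.accept(work.get()); // a fresh execution for this subscriber
        }
    }

    static int requestsFiredFor(int subscriberCount) {
        AtomicInteger requests = new AtomicInteger();
        // Stand-in for an HTTP call; the counter records how often it actually runs.
        ColdSource<String> http = new ColdSource<>(() -> "response-" + requests.incrementAndGet());
        for (int i = 0; i < subscriberCount; i++) {
            http.subscribe(response -> { /* each subscriber receives its own response */ });
        }
        return requests.get();
    }

    public static void main(String[] args) {
        System.out.println(requestsFiredFor(3)); // prints 3
    }
}
```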

A hot observable is already running, regardless of who is listening.

Imagine a live radio station. It broadcasts continuously. If you tune in at 9pm, you hear whatever is playing right now. You don't get to rewind to this morning's programme. You joined a stream that was already in progress, and anything that played before you arrived is simply gone.

A WebSocket connection works this way. The server is pushing events continuously. When your code starts listening, it receives events from that moment forward. Anything that arrived before you started listening is already gone — there's no going back.
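The late-listener effect can be reproduced with the JDK's `SubmissionPublisher`, which publishes only to subscribers that are already attached. This is a sketch with invented event names, not WebSocket code:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.SubmissionPublisher;

final class HotSourceExample {
    static List<String> eventsSeenByLateListener() {
        List<String> seen = new CopyOnWriteArrayList<>();
        SubmissionPublisher<String> feed = new SubmissionPublisher<>();

        feed.submit("event-1"); // nobody is listening yet, so this event is gone

        CompletableFuture<Void> done = feed.consume(seen::add); // the listener tunes in now
        feed.submit("event-2");
        feed.submit("event-3");

        feed.close();
        done.join(); // demo only: wait until the listener has drained the feed
        return seen;
    }

    public static void main(String[] args) {
        System.out.println(eventsSeenByLateListener()); // prints [event-2, event-3]
    }
}
```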

Why this caused the missing orders bug

The team had a stream of incoming orders. Two separate parts of the UI needed to react to each new order: the confirmation screen and the dashboard.

They wired both up to the same stream. What they didn't realise was that their stream was cold, meaning each listener got its own independent execution of the stream rather than sharing a single one. The confirmation screen started listening first and saw the order arrive. The dashboard started listening a fraction of a second later. Because the stream was cold, it created a completely fresh execution for the dashboard, but the order event had already been delivered to the first execution, and the live source behind the stream did not deliver it a second time. The dashboard's version never saw it. The order appeared to vanish.

In OOP, this problem literally cannot exist. If you have a list of orders and two functions both read from it, they both get the same list. There is no concept of reading the list consuming the data. The data just sits there until you explicitly change it.

The reactive fix is to make the stream hot — specifically to make it multicast, meaning it runs once and broadcasts to all listeners simultaneously, rather than spinning up a separate execution for each one. With that change, both the confirmation screen and the dashboard receive the same order event from the same single execution, and the bug disappears.
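A sketch of the multicast idea, again with `SubmissionPublisher` standing in for a shared stream. The two lists represent the confirmation screen and the dashboard, and the order id is made up:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.SubmissionPublisher;

final class MulticastExample {
    // Returns what the confirmation screen saw, then what the dashboard saw.
    static List<List<String>> deliver(String order) {
        List<String> confirmation = new CopyOnWriteArrayList<>();
        List<String> dashboard = new CopyOnWriteArrayList<>();
        SubmissionPublisher<String> orders = new SubmissionPublisher<>();

        // Both listeners attach to the same single execution.
        CompletableFuture<Void> first = orders.consume(confirmation::add);
        CompletableFuture<Void> second = orders.consume(dashboard::add);

        orders.submit(order); // published once, broadcast to every current listener
        orders.close();
        CompletableFuture.allOf(first, second).join(); // demo only
        return List.of(confirmation, dashboard);
    }

    public static void main(String[] args) {
        System.out.println(deliver("order-42")); // prints [[order-42], [order-42]]
    }
}
```

In Reactor, operators such as `share()` or `publish()` turn a cold `Flux` into a multicast one. Note that multicasting alone does not help a listener that attaches after the event; that case needs replay or caching as well.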

The real difference in how you fight bugs

In OOP, the state problems you fight are about mutation. Two pieces of code change the same variable at the same time and corrupt each other's work. You solve this with careful access control, with making data immutable, with being deliberate about who is allowed to change what.

In reactive programming, the state problems you fight are different in character. You worry about whether your stream is hot or cold. You worry about whether a listener that joined late missed data it needed. You worry about accidentally triggering duplicate side effects because two parts of your app are both listening to a cold stream that fires a network request for each of them.

Neither paradigm is free of complexity. The complexity just moves to a different place — and the bugs that come from not understanding where it moved tend to be subtle, timing-dependent, and genuinely confusing until the concept finally clicks.

Error handling: where streams go silent

In OOP, the model is familiar and bounded. You wrap risky code, catch the exception, and decide what to do. The error travels up the call stack until something handles it, and if nothing does, it surfaces loudly — in your logs, in your thread, on your screen.

The error handling lives immediately next to the code that can fail. There is no distance between cause and response.
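In code, that locality looks roughly like this. `fetchPrice` is a hypothetical pricing call that fails for a blank SKU, and the prices are invented:

```java
final class TryCatchExample {
    // Hypothetical pricing call; throws for a blank SKU to simulate a failure.
    static int fetchPrice(String sku) {
        if (sku.isBlank()) {
            throw new IllegalArgumentException("unknown SKU");
        }
        return 120;
    }

    // The recovery sits right next to the call that can fail.
    static int priceOrFallback(String sku, int fallback) {
        try {
            return fetchPrice(sku);
        } catch (IllegalArgumentException e) {
            System.err.println("pricing failed: " + e.getMessage()); // the failure is loud
            return fallback;
        }
    }

    public static void main(String[] args) {
        System.out.println(priceOrFallback("sku-1", 0)); // prints 120
        System.out.println(priceOrFallback(" ", 0));     // prints 0
    }
}
```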

In reactive, errors terminate streams by default, and they can do so quietly. If your pipeline encounters an error and you have not explicitly handled it, that stream stops emitting values permanently. No exception travels up a call stack to the code that started the work. Whatever trace exists tends to be a log line emitted far from the cause, and if an upstream operator swallowed the error there may be none at all unless you added one. To the rest of the system, the stream just goes silent. This is exactly what happened to that team in our story. A database timeout inside a flatMap terminated the stream, and because nothing handled the error signal, the system simply stopped showing orders.

The reactive equivalent requires you to handle errors as part of the transformation chain itself:
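A minimal sketch of that placement, using the JDK's `CompletableFuture` as a dependency-free stand-in for Reactor types. The mapping is approximate: `thenCompose` plays the role of `flatMap`, `exceptionally` the role of `onErrorResume`, and `thenApply` the role of `map`. The `loadOrder` and `fetchPrice` methods and their failure flags are hypothetical:

```java
import java.util.concurrent.CompletableFuture;

final class ErrorPlacementExample {
    // Hypothetical database read; can be told to fail for the demo.
    static CompletableFuture<String> loadOrder(boolean dbFails) {
        return dbFails
            ? CompletableFuture.failedFuture(new IllegalStateException("database timeout"))
            : CompletableFuture.completedFuture("order-1");
    }

    // Hypothetical pricing call; can be told to fail for the demo.
    static CompletableFuture<Integer> fetchPrice(String orderId, boolean pricingFails) {
        return pricingFails
            ? CompletableFuture.failedFuture(new IllegalStateException("pricing unavailable"))
            : CompletableFuture.completedFuture(120);
    }

    static CompletableFuture<String> priced(boolean dbFails, boolean pricingFails) {
        return loadOrder(dbFails)
            .thenCompose(orderId ->                              // like flatMap
                fetchPrice(orderId, pricingFails)
                    .exceptionally(error -> 0)                   // recovery covers pricing only
                    .thenApply(price -> orderId + ":" + price)); // like map
        // A database failure is not touched by the recovery above and still propagates.
    }

    public static void main(String[] args) {
        System.out.println(priced(false, false).join());              // prints order-1:120
        System.out.println(priced(false, true).join());               // prints order-1:0
        System.out.println(priced(true, false).isCompletedExceptionally()); // prints true
    }
}
```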

Notice that onErrorResume must be placed precisely — after the WebClient call but before the map. Move it outside the flatMap and it no longer catches pricing failures specifically; it catches everything, including database errors you may want to handle differently. The error handling is expressive, but its meaning changes depending on where in the chain it sits. That positional sensitivity is what catches teams off guard.

The deeper trap is retry. If you attach .retry(3) to recover from transient failures, and the failing operation has a side effect — a database write, an audit log entry, a payment charge — that side effect can execute multiple times before the stream stabilizes. In OOP, you control retry in a loop and can guard against this explicitly. In reactive, the operator retries the entire upstream chain, and it does so without telling you which operations already succeeded.
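The duplication is easy to reproduce. The sketch below uses a hand-rolled `retry` helper (hypothetical, not the Reactor operator) that re-runs the whole operation, in the same way that resubscribing re-runs the whole upstream chain. The audit counter stands in for a real write:

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

final class RetrySideEffectExample {
    // Re-runs the whole operation on failure, allowing up to maxRetries extra attempts.
    static <T> T retry(int maxRetries, Supplier<T> operation) {
        RuntimeException last = new IllegalStateException("no attempt was made");
        for (int attempt = 0; attempt <= maxRetries; attempt++) {
            try {
                return operation.get();
            } catch (RuntimeException e) {
                last = e; // remember the failure and try again from the top
            }
        }
        throw last;
    }

    static int auditWritesAfterRetry() {
        AtomicInteger auditWrites = new AtomicInteger();
        retry(3, () -> {
            int writes = auditWrites.incrementAndGet(); // side effect runs on every attempt
            if (writes < 3) {                           // first two attempts fail after the write
                throw new IllegalStateException("transient failure");
            }
            return "ok";
        });
        return auditWrites.get();
    }

    public static void main(String[] args) {
        System.out.println(auditWritesAfterRetry()); // prints 3: one logical operation, three writes
    }
}
```

One common guard is to make the side effect idempotent, or to move it after the step that can fail so a retry does not repeat it.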

This is the point of no return for many teams. Not because reactive error handling is inadequate — the operators are genuinely expressive once you know them — but because the failure modes are silent and positional in ways that OOP developers are not trained to expect. A missing try-catch throws an exception and wakes someone up. A missing onErrorResume produces four days of confusion over why orders are disappearing.

Where reactive actually wins

After all these warnings, you might wonder why anyone chooses reactive programming at all. The answer lies in two problems that OOP handles poorly at scale: concurrency efficiency and backpressure.

Object-oriented programs typically handle concurrency with threads, one thread per task. This works fine until you have ten thousand concurrent tasks, at which point memory for thread stacks and the cost of context switching start to overwhelm the system. Thread pools help, but then tasks queue waiting for available threads. Callbacks help, but produce deeply nested, difficult-to-read code. Each approach carries real trade-offs.

Reactive programming solves this by using small, fixed thread pools combined with a non-blocking execution model. Instead of blocking a thread while waiting for a database query or HTTP response, reactive streams release the thread to do other work. The thread only executes when data is actually available to process.

Consider a web server handling API requests. In OOP with blocking I/O, each request occupies a thread from arrival to response. If that request makes three database queries and two HTTP calls, the thread sits idle waiting for network responses for most of its life. In reactive with non-blocking I/O, while one request waits for the database, the same thread can handle dozens of others.
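The effect can be approximated with the JDK alone. In this sketch, two worker threads serve many simulated requests. Each "I/O wait" is a timer delay via `CompletableFuture.delayedExecutor`, so no worker is held while waiting; the JDK's timer thread stands in for non-blocking I/O. The request count and delay are arbitrary:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Executor;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

final class NonBlockingWaitExample {
    // Returns how many simulated requests completed successfully.
    static int handle(int requests, long ioDelayMillis) {
        ExecutorService workers = Executors.newFixedThreadPool(2); // small, fixed pool
        try {
            // A worker only runs once the simulated response is ready.
            Executor afterIo =
                CompletableFuture.delayedExecutor(ioDelayMillis, TimeUnit.MILLISECONDS, workers);

            List<CompletableFuture<Integer>> inFlight = IntStream.range(0, requests)
                .mapToObj(id -> CompletableFuture.supplyAsync(() -> id, afterIo))
                .collect(Collectors.toList());

            // Demo only: wait for everything so the outcome can be counted.
            CompletableFuture.allOf(inFlight.toArray(new CompletableFuture[0])).join();
            return (int) inFlight.stream().filter(f -> !f.isCompletedExceptionally()).count();
        } finally {
            workers.shutdown();
        }
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        int completed = handle(200, 50);
        long elapsedMillis = (System.nanoTime() - start) / 1_000_000;
        System.out.println(completed + " requests in " + elapsedMillis + " ms");
    }
}
```

If each of the 200 requests instead slept for 50 ms on one of the two workers, the run would take about five seconds. Here the waits overlap, so the total is typically much closer to a single delay.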

There is a subtlety here worth naming. Reactive can also mask latency problems rather than expose them. Non-blocking systems are excellent at keeping throughput high under concurrent load, but they can hide individual request latency spikes. A reactive system handling ten thousand concurrent requests might show healthy aggregate throughput even as individual requests degrade quietly in the background. If your performance regressions are latency-based rather than throughput-based, reactive's observability tools are less intuitive than profiling a blocked thread.

The second genuine win is backpressure. Imagine a fast producer sending data faster than a slow consumer can process — a WebSocket feed pushing stock prices faster than your screen can render them. In OOP, you implement bounded queues and rejection policies manually. In reactive, backpressure is built into the specification. The consumer signals how much data it can handle; the producer respects that demand. No manual queue management. It just works, provided both ends implement the reactive streams protocol properly.
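The demand signalling described above is visible in the JDK's own `java.util.concurrent.Flow` interfaces, which mirror the reactive streams protocol. This sketch shows a consumer that requests one item at a time; the integer "prices" are invented:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;

final class BackpressureExample {
    // A slow consumer: it never has more than one outstanding request.
    static final class OneAtATime implements Flow.Subscriber<Integer> {
        final List<Integer> received = new CopyOnWriteArrayList<>();
        final CompletableFuture<Void> finished = new CompletableFuture<>();
        private Flow.Subscription subscription;

        @Override public void onSubscribe(Flow.Subscription subscription) {
            this.subscription = subscription;
            subscription.request(1); // initial demand: exactly one item
        }

        @Override public void onNext(Integer price) {
            received.add(price);     // "render" the price
            subscription.request(1); // only now signal readiness for the next one
        }

        @Override public void onError(Throwable error) {
            finished.completeExceptionally(error);
        }

        @Override public void onComplete() {
            finished.complete(null);
        }
    }

    static List<Integer> run(int count) {
        OneAtATime consumer = new OneAtATime();
        SubmissionPublisher<Integer> prices = new SubmissionPublisher<>();
        prices.subscribe(consumer);
        for (int i = 1; i <= count; i++) {
            prices.submit(i); // blocks the producer if the consumer's buffer is full
        }
        prices.close();             // signals onComplete after pending items are delivered
        consumer.finished.join();   // demo only
        return consumer.received;
    }

    public static void main(String[] args) {
        System.out.println(run(5)); // prints [1, 2, 3, 4, 5]
    }
}
```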

What makes this genuinely achievable today, and not merely theoretical, is that the ecosystem has caught up. Five years ago, adopting reactive often meant your WebFlux controller was non-blocking while your database driver quietly blocked a thread underneath it, negating much of the benefit. That gap has largely closed. Spring's WebClient offers a fully non-blocking HTTP client in place of RestTemplate. R2DBC provides reactive relational database drivers for Postgres, MySQL, and others. ReactiveMongoRepository gives you non-blocking document access without leaving the Spring Data model you already know. Kafka's reactive consumer support, Redis's reactive client via Lettuce, and reactive drivers for Cassandra and Elasticsearch mean that a genuinely end-to-end non-blocking pipeline, from inbound HTTP request through service calls to database and back, is now a real architectural option, not an aspiration. When every layer speaks the same reactive streams protocol, the efficiency gains compound rather than cancel out at each boundary.

Here is what that looks like in practice, in the form of a Spring WebFlux order handler that calls an external pricing service, enriches the result from MongoDB, and handles failure, without blocking a thread at any point in the chain:
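The shape of such a handler can be sketched without Spring on the classpath. This version is an approximation that uses the JDK's `CompletableFuture` in place of `Mono`, an in-memory map in place of MongoDB, and a stubbed pricing call in place of WebClient. All names and values are hypothetical:

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;

final class OrderPipelineExample {
    // Stand-in for a reactive repository backed by a document store.
    static final Map<String, String> ORDERS = Map.of("42", "keyboard");

    static CompletableFuture<String> findItem(String orderId) { // "database read"
        return CompletableFuture.supplyAsync(() -> {
            String item = ORDERS.get(orderId);
            if (item == null) {
                throw new IllegalArgumentException("no such order: " + orderId);
            }
            return item;
        });
    }

    static CompletableFuture<Integer> fetchPrice(String item, boolean pricingDown) { // "HTTP call"
        return CompletableFuture.supplyAsync(() -> {
            if (pricingDown) {
                throw new IllegalStateException("pricing service unavailable");
            }
            return 120;
        });
    }

    static String describe(String item, int price) { // transformation
        return item + " @ " + (price < 0 ? "price pending" : String.valueOf(price));
    }

    // One composed pipeline: read, call, transform, fall back, log.
    static CompletableFuture<String> handle(String orderId, boolean pricingDown) {
        return findItem(orderId)
            .thenCompose(item -> fetchPrice(item, pricingDown)
                .exceptionally(error -> -1)                 // fallback for pricing failures only
                .thenApply(price -> describe(item, price)))
            .whenComplete((summary, error) -> {             // logging
                if (error != null) {
                    System.err.println("order " + orderId + " failed: " + error);
                }
            });
    }

    public static void main(String[] args) {
        // join() appears only here, at the edge of the demo, to print the results.
        System.out.println(handle("42", false).join()); // prints keyboard @ 120
        System.out.println(handle("42", true).join());  // prints keyboard @ price pending
    }
}
```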

Notice what is absent: no thread blocking, no get() calls forcing synchronous waits, no try-catch wrapped around I/O. The entire flow — database read, HTTP call, transformation, fallback, logging — is declared as a single composed pipeline. The thread handling this request is released the moment it hits the first non-blocking operation, free to serve other requests until data arrives at each stage. At high concurrency, this is the difference between needing fifty threads and needing five.

This combination of efficient concurrency and built-in backpressure makes reactive the right choice when concurrency is your actual bottleneck: high-throughput API gateways, real-time data pipelines, chat applications, event-driven systems with many concurrent operations waiting on external resources.

One thing to notice about the code above. Everything lives in the controller layer. That is fine for an example, but in a real system you should follow proper layering just as you would in a non-reactive application. Keep your controllers thin. Push business logic into services. Keep a separate DAO or client layer for data access and outbound calls. Reactive does not change that rule.

Where reactive struggles

Let us be concrete about failure.

A team rewrote a working CRUD application in WebFlux because they wanted to be modern. The application handled customer records with straightforward validation rules, database inserts, and occasional email notifications. It had never had a performance problem. The OOP version was clear, maintainable, and had been in production for two years without incident.

The reactive rewrite took three months. Validation logic that was six lines of if statements became chains of filter and flatMap operators. Error handling that was simple try-catch became retry specifications and fallback streams. Complexity increased by a factor of five — not because the problem was hard, but because the reactive style was a poor match for the domain.

And the system did not get faster. It was never bottlenecked by concurrency to begin with. The team had added enormous complexity to solve a problem they did not have.

Six months later, the two developers who understood the reactive code had moved on, and the team had to revert to the OOP version because nobody could debug the stream chains anymore.

Reactive may be the wrong choice for systems where business rule complexity dominates over coordination complexity: CRUD applications with modest traffic, internal admin panels, batch jobs, and any system where the primary difficulty is expressing domain logic rather than managing concurrent operations. For these systems, object-oriented programming with blocking I/O remains simpler to write, simpler to debug, and dramatically faster to evolve. A competent team can build and ship an OOP CRUD application in the time a reactive team spends figuring out why their stream stopped emitting values because they forgot to subscribe.

Reactive also struggles in teams not already grounded in functional programming. The operator model — understanding the difference between flatMap, switchMap, and concatMap — requires a grasp of higher-order functions and composition. Teaching these concepts while also delivering production features creates real and sustained tension.

How real systems actually work

Here is the nuance worth noting. Real production systems are rarely pure OOP or pure reactive. The most successful ones use each style where it genuinely fits.

The pattern that has emerged from years of building production systems is this: use object-oriented programming for your domain logic and reactive programming at your integration edges.

Your business rules, your validations, your complex state transitions — these remain in OOP because clarity matters most there. But when you need to handle many concurrent connections, stream data from message brokers, or build real-time feeds, you use reactive at the boundaries.

This works because you can bridge between the worlds. A reactive controller can call an OOP service, receive a domain object, and wrap it in a Mono or Flux for downstream consumers (for example, a ReactJS front end calling the API). Your domain code does not need to become reactive. It just needs to adapt its results at the edges. Conversely, a reactive stream can accumulate values into a collection and hand that collection off to an OOP service for business logic processing.
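A sketch of the second direction, where a stream is collected and handed to plain domain code. `DiscountService` and its discount rule are hypothetical, and JDK types again stand in for Reactor; in Reactor the collecting step would typically be `collectList()`:

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.SubmissionPublisher;

final class BridgeExample {
    // Plain OOP domain service: synchronous, no reactive types anywhere.
    static final class DiscountService {
        int discountedTotal(List<Integer> lineTotals) {
            int sum = lineTotals.stream().mapToInt(Integer::intValue).sum();
            return sum >= 100 ? sum - 10 : sum; // hypothetical rule: 10 off orders of 100 or more
        }
    }

    // Reactive edge: collect the stream, call the domain service, wrap the result.
    static CompletableFuture<Integer> totalFor(List<Integer> incomingLines) {
        List<Integer> collected = new CopyOnWriteArrayList<>();
        SubmissionPublisher<Integer> lines = new SubmissionPublisher<>();
        CompletableFuture<Void> streamDone = lines.consume(collected::add);

        incomingLines.forEach(lines::submit);
        lines.close();

        // Once the stream completes, hand the whole collection to the domain code.
        return streamDone.thenApply(ignored -> new DiscountService().discountedTotal(collected));
    }

    public static void main(String[] args) {
        System.out.println(totalFor(List.of(60, 50)).join()); // prints 100
        System.out.println(totalFor(List.of(20)).join());     // prints 20
    }
}
```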

The key insight is this: reactive is not a programming style for your domain. It is a wiring style for your system. You apply it where data moves across boundaries or where concurrency pressure demands it. You keep OOP where business logic lives. The two coexist because they solve genuinely different problems.

When to choose and when to walk away

Reactive programming is not a default performance upgrade — it is an architectural choice about how asynchronous work is composed.

It is appropriate when the system is dominated by non-blocking flows: database calls, network requests, caches, and external services that must be coordinated without tying up threads. It fits naturally when the problem is stream-shaped — real-time dashboards, WebSockets, server-sent events, or message-driven pipelines — where data is continuously flowing rather than being requested once.

But reactive design only pays off when the cost of waiting becomes structural, not incidental.

It becomes a poor fit when the real constraints are elsewhere: business logic complexity, debugging needs, or team familiarity. In these systems, reactive abstractions often obscure control flow more than they improve performance. A stack trace becomes harder to follow. An incident becomes harder to reconstruct. Onboarding slows down while operators multiply.

There is a pattern that repeats across systems that adopt reactive too early. The decision is rarely driven by profiling. It is driven by perception — “the system feels slow” or “we need non-blocking everywhere.” After months of migration, teams sometimes discover the original bottleneck was a missing index, a chatty API, or inefficient serialization.

The rule is simple but unforgiving: profile before you abstract. If concurrency or blocking I/O is truly the limiting factor, reactive design can be justified. If not, it is complexity you are paying for without a structural gain.

The mental model you cannot undo

You cannot think both ways at once.

Object-oriented programming thinks about state at a moment in time. Reactive thinks about state over time. Both are valid mental models. But they are not compatible in the same debugging session. When you go reactive, you are not just adopting a new library. You are committing to a new way of reasoning about your program — one that takes time to internalize and that changes how you read code, how you write tests, and how you explain failures to the person on call next to you.

That team with the missing orders eventually succeeded with reactive. They kept it at their integration layer, used it for the high-throughput order submission pipeline, and left their admin dashboard in OOP where clarity mattered more. The architecture worked. But it worked because they stopped trying to hold both models at once, and made a deliberate choice about which one owned each part of the system.

Reactive and OOP are not competing tools. They are incompatible ways of thinking about time. You can use both in a system — but in production, at two in the morning, you can only debug in one of them. Make sure you have chosen deliberately, and that your team knows which world they are standing in.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education: explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.